RANDOM POSTS

-

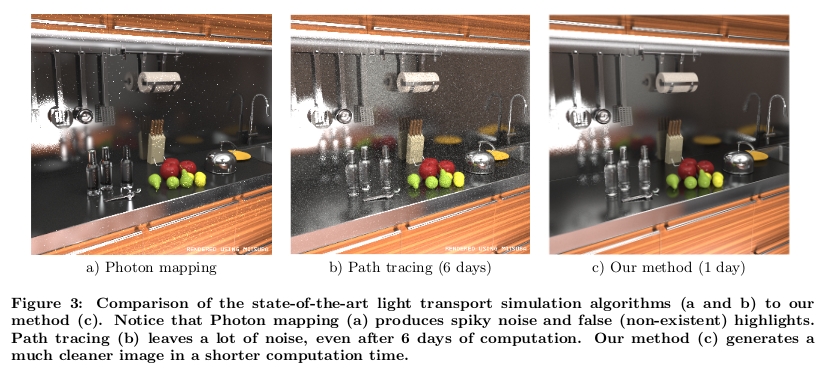

Guided raytracing – Optimal Strategy for Connecting Light Paths in Bidirectional Methods for Global Illumination Computation

Read more: http://acmbulletin.fiit.stuba.sk/vol3num4/vorbaSPY.pdf

http://cgg.mff.cuni.cz/~jirka/papers/2014/olpm/index.htm

-

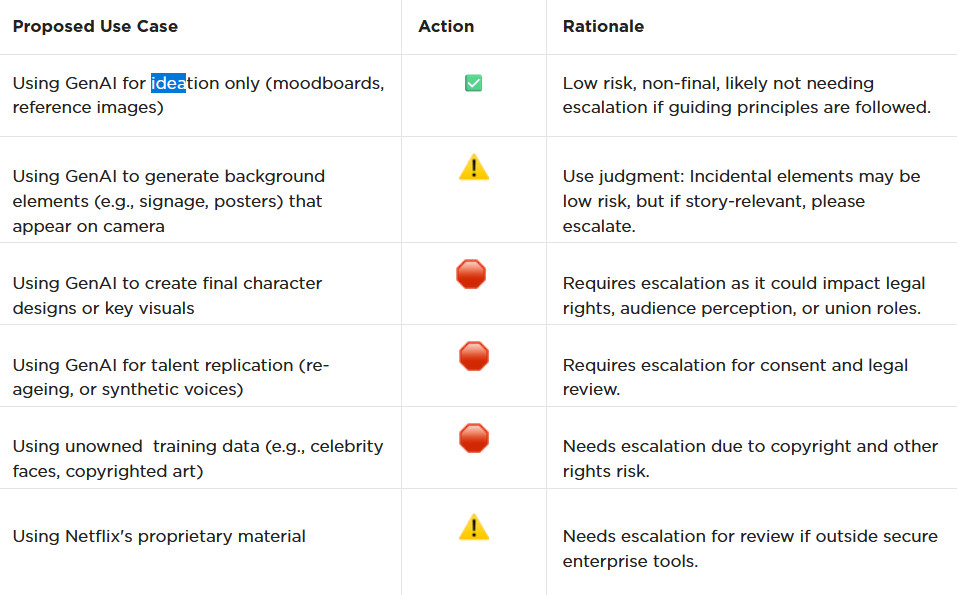

AI and the Law – Netflix : Using Generative AI in Content Production

Read more: https://www.cartoonbrew.com/business/netflix-generative-ai-use-guidelines-253300.html

- Temporary Use: AI-generated material can be used for ideation, visualization, and exploration—but is currently considered temporary and not part of final deliverables.

- Ownership & Rights: All outputs must be carefully reviewed to ensure rights, copyright, and usage are properly cleared before integrating into production.

- Transparency: Productions are expected to document and disclose how generative AI is used.

- Human Oversight: AI tools are meant to support creative teams, not replace them—final decision-making rests with human creators.

- Security & Compliance: Any use of AI tools must align with Netflix’s security protocols and protect confidential production material.

-

George Carlin on divine interventions

If God has a divine plan for everything, why pray to change it…

-

Pantheon of the War – The colossal war painting

Four years in the making with the help of 150 artists, in commemoration of WW1.

edition.cnn.com/style/article/pantheon-de-la-guerre-wwi-painting/index.html

The panoramic canvas measured 402 feet (122 meters) around and 45 feet (13.7 meters) high, and contained over 5,000 life-size portraits of war heroes, royalty and government officials from the Allies of World War I.


-

Finn Jager – From HEIC (High Efficiency Image Container) iPhone to a Multichannel EXR

Finn Jäger has spent some time making a sleeker tool for all you VFX nerds out there: it takes a HEIC iPhone still and exports a multichannel EXR. The cool thing is that it also converts the image to ACEScg and merges the SDR base image with the gain map according to Apple's math:

hdr_rgb = sdr_rgb * (1.0 + (headroom - 1.0) * gainmap)

https://github.com/finnschi/heic-shenanigans
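The gain map merge quoted above can be sketched in a few lines of plain Python. This is only an illustration of the formula, not code from the linked repository: the function name `apply_gain_map`, the flat list-of-pixels layout, and the assumption that the SDR values are already linear-light and the gain map already matches the image size are all hypothetical choices made for the example.

```python
def apply_gain_map(sdr_rgb, gainmap, headroom):
    """Merge an SDR base image with a gain map, per pixel.

    Implements: hdr_rgb = sdr_rgb * (1.0 + (headroom - 1.0) * gainmap)

    sdr_rgb  -- list of (r, g, b) float tuples (assumed linear-light)
    gainmap  -- list of floats in [0, 1], one per pixel (assumed same size)
    headroom -- HDR headroom factor, 1.0 meaning "no extra range"
    """
    if len(sdr_rgb) != len(gainmap):
        raise ValueError("sdr_rgb and gainmap must have the same pixel count")

    hdr_rgb = []
    for (r, g, b), gain in zip(sdr_rgb, gainmap):
        # A gain of 0 leaves the pixel untouched; a gain of 1 scales it
        # by the full headroom.
        scale = 1.0 + (headroom - 1.0) * gain
        hdr_rgb.append((r * scale, g * scale, b * scale))
    return hdr_rgb


if __name__ == "__main__":
    pixels = [(0.5, 0.25, 1.0), (0.5, 0.25, 1.0)]
    gains = [0.0, 1.0]
    print(apply_gain_map(pixels, gains, headroom=4.0))
```

With a headroom of 1.0 the scale factor is always 1.0, so the output equals the SDR input regardless of the gain map.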


DISCLAIMER – Links and images on this website may be protected by the respective owners’ copyright. All data submitted by users through this site shall be treated as freely available to share.