COMPOSITION

-

StudioBinder – Roger Deakins on How to Choose a Camera Lens — Cinematography Composition Techniques

Read more: StudioBinder – Roger Deakins on How to Choose a Camera Lens — Cinematography Composition Techniqueshttps://www.studiobinder.com/blog/camera-lens-buying-guide/

https://www.studiobinder.com/blog/e-books/camera-lenses-explained-volume-1-ebook

-

Composition – cinematography Cheat Sheet

Read more: Composition – cinematography Cheat SheetWhere is our eye attracted first? Why?

Size. Focus. Lighting. Color.

Size. Mr. White (Harvey Keitel) on the right.

Focus. He’s one of the two objects in focus.

Lighting. Mr. White is large and in focus and Mr. Pink (Steve Buscemi) is highlighted by

a shaft of light.

Color. Both are black and white but the read on Mr. White’s shirt now really stands out.

What type of lighting?-> High key lighting.

Features bright, even illumination and few conspicuous shadows. This lighting key is often used in musicals and comedies.Low key lighting

Features diffused shadows and atmospheric pools of light. This lighting key is often used in mysteries and thrillers.High contrast lighting

Features harsh shafts of lights and dramatic streaks of blackness. This type of lighting is often used in tragedies and melodramas.What type of shot?

Extreme long shot

Taken from a great distance, showing much of the locale. Ifpeople are included in these shots, they usually appear as mere specks-> Long shot

Corresponds to the space between the audience and the stage in a live theater. The long shots show the characters and some of the locale.Full shot

Range with just enough space to contain the human body in full. The full shot shows the character and a minimal amount of the locale.Medium shot

Shows the human figure from the knees or waist up.Close-Up

Concentrates on a relatively small object and show very little if any locale.Extreme close-up

Focuses on an unnaturally small portion of an object, giving that part great detail and symbolic significance.What angle?

Bird’s-eye view.

The shot is photographed directly from above. This type of shot can be disorienting, and the people photographed seem insignificant.High angle.

This angle reduces the size of the objects photographed. A person photographed from this angle seems harmless and insignificant, but to a lesser extent than with the bird’s-eye view.-> Eye-level shot.

The clearest view of an object, but seldom intrinsically dramatic, because it tends to be the norm.Low angle.

This angle increases high and a sense of verticality, heightening the importance of the object photographed. A person shot from this angle is given a sense of power and respect.Oblique angle.

For this angle, the camera is tilted laterally, giving the image a slanted appearance. Oblique angles suggest tension, transition, a impending movement. They are also called canted or dutch angles.What is the dominant color?

The use of color in this shot is symbolic. The scene is set in warehouse. Both the set and characters are blues, blacks and whites.

This was intentional allowing for the scenes and shots with blood to have a great level of contrast.

What is the Lens/Filter/Stock?

Telephoto lens.

A lens that draws objects closer but also diminishes the illusion of depth.Wide-angle lens.

A lens that takes in a broad area and increases the illusion of depth but sometimes distorts the edges of the image.Fast film stock.

Highly sensitive to light, it can register an image with little illumination. However, the final product tends to be grainy.Slow film stock.

Relatively insensitive to light, it requires a great deal of illumination. The final product tends to look polished.The lens is not wide-angle because there isn’t a great sense of depth, nor are several planes in focus. The lens is probably long but not necessarily a telephoto lens because the depth isn’t inordinately compressed.

The stock is fast because of the grainy quality of the image.

Subsidiary Contrast; where does the eye go next?

The two guns.

How much visual information is packed into the image? Is the texture stark, moderate, or highly detailed?

Minimalist clutter in the warehouse allows a focus on a character driven thriller.

What is the Composition?

Horizontal.

Compositions based on horizontal lines seem visually at rest and suggest placidity or peacefulness.Vertical.

Compositions based on vertical lines seem visually at rest and suggest strength.-> Diagonal.

Compositions based on diagonal, or oblique, lines seem dynamic and suggest tension or anxiety.-> Binary. Binary structures emphasize parallelism.

Triangle.

Triadic compositions stress the dynamic interplay among three mainCircle.

Circular compositions suggest security and enclosure.Is the form open or closed? Does the image suggest a window that arbitrarily isolates a fragment of the scene? Or a proscenium arch, in which the visual elements are carefully arranged and held in balance?

The most nebulous of all the categories of mise en scene, the type of form is determined by how consciously structured the mise en scene is. Open forms stress apparently simple techniques, because with these unself-conscious methods the filmmaker is able to emphasize the immediate, the familiar, the intimate aspects of reality. In open-form images, the frame tends to be deemphasized. In closed form images, all the necessary information is carefully structured within the confines of the frame. Space seems enclosed and self-contained rather than continuous.

Could argue this is a proscenium arch because this is such a classic shot with parallels and juxtapositions.

Is the framing tight or loose? Do the character have no room to move around, or can they move freely without impediments?

Shots where the characters are placed at the edges of the frame and have little room to move around within the frame are considered tight.

Longer shots, in which characters have room to move around within the frame, are considered loose and tend to suggest freedom.

Center-framed giving us the entire scene showing isolation, place and struggle.

Depth of Field. On how many planes is the image composed (how many are in focus)? Does the background or foreground comment in any way on the mid-ground?

Standard DOF, one background and clearly defined foreground.

Which way do the characters look vis-a-vis the camera?

An actor can be photographed in any of five basic positions, each conveying different psychological overtones.

Full-front (facing the camera):

the position with the most intimacy. The character is looking in our direction, inviting our complicity.Quarter Turn:

the favored position of most filmmakers. This position offers a high degree of intimacy but with less emotional involvement than the full-front.-> Profile (looking of the frame left or right):

More remote than the quarter turn, the character in profile seems unaware of being observed, lost in his or her own thoughts.Three-quarter Turn:

More anonymous than the profile, this position is useful for conveying a character’s unfriendly or antisocial feelings, for in effect, the character is partially turning his or her back on us, rejecting our interest.Back to Camera:

The most anonymous of all positions, this position is often used to suggest a character’s alienation from the world. When a character has his or her back to the camera, we can only guess what’s taking place internally, conveying a sense of concealment, or mystery.How much space is there between the characters?

Extremely close, for a gunfight.

The way people use space can be divided into four proxemic patterns.

Intimate distances.

The intimate distance ranges from skin contact to about eighteen inches away. This is the distance of physical involvement–of love, comfort, and tenderness between individuals.-> Personal distances.

The personal distance ranges roughly from eighteen inches away to about four feet away. These distances tend to be reserved for friends and acquaintances. Personal distances preserve the privacy between individuals, yet these rages don’t necessarily suggest exclusion, as intimate distances often do.Social distances.

The social distance rages from four feet to about twelve feet. These distances are usually reserved for impersonal business and casual social gatherings. It’s a friendly range in most cases, yet somewhat more formal than the personal distance.Public distances.

The public distance extends from twelve feet to twenty-five feet or more. This range tends to be formal and rather detached.

DESIGN

COLOR

-

SecretWeapons MixBox – a practical library for paint-like digital color mixing

Read more: SecretWeapons MixBox – a practical library for paint-like digital color mixingInternally, Mixbox treats colors as real-life pigments using the Kubelka & Munk theory to predict realistic color behavior.

https://scrtwpns.com/mixbox/painter/

https://scrtwpns.com/mixbox.pdf

https://github.com/scrtwpns/mixbox

https://scrtwpns.com/mixbox/docs/

-

Brett Jones / Phil Reyneri (Lightform) / Philipp7pc: The study of Projection Mapping through Projectors

Read more: Brett Jones / Phil Reyneri (Lightform) / Philipp7pc: The study of Projection Mapping through ProjectorsVideo Projection Tool Software

https://hcgilje.wordpress.com/vpt/

https://www.projectorpoint.co.uk/news/how-bright-should-my-projector-be/

http://www.adwindowscreens.com/the_calculator/

heavym

https://heavym.net/en/

MadMapper

https://madmapper.com/ -

Capturing textures albedo

Read more: Capturing textures albedoBuilding a Portable PBR Texture Scanner by Stephane Lb

http://rtgfx.com/pbr-texture-scanner/How To Split Specular And Diffuse In Real Images, by John Hable

http://filmicworlds.com/blog/how-to-split-specular-and-diffuse-in-real-images/Capturing albedo using a Spectralon

https://www.activision.com/cdn/research/Real_World_Measurements_for_Call_of_Duty_Advanced_Warfare.pdfReal_World_Measurements_for_Call_of_Duty_Advanced_Warfare.pdf

Spectralon is a teflon-based pressed powderthat comes closest to being a pure Lambertian diffuse material that reflects 100% of all light. If we take an HDR photograph of the Spectralon alongside the material to be measured, we can derive thediffuse albedo of that material.

The process to capture diffuse reflectance is very similar to the one outlined by Hable.

1. We put a linear polarizing filter in front of the camera lens and a second linear polarizing filterin front of a modeling light or a flash such that the two filters are oriented perpendicular to eachother, i.e. cross polarized.

2. We place Spectralon close to and parallel with the material we are capturing and take brack-eted shots of the setup7. Typically, we’ll take nine photographs, from -4EV to +4EV in 1EVincrements.

3. We convert the bracketed shots to a linear HDR image. We found that many HDR packagesdo not produce an HDR image in which the pixel values are linear. PTGui is an example of apackage which does generate a linear HDR image. At this point, because of the cross polarization,the image is one of surface diffuse response.

4. We open the file in Photoshop and normalize the image by color picking the Spectralon, filling anew layer with that color and setting that layer to “Divide”. This sets the Spectralon to 1 in theimage. All other color values are relative to this so we can consider them as diffuse albedo.

-

HDR and Color

Read more: HDR and Colorhttps://www.soundandvision.com/content/nits-and-bits-hdr-and-color

In HD we often refer to the range of available colors as a color gamut. Such a color gamut is typically plotted on a two-dimensional diagram, called a CIE chart, as shown in at the top of this blog. Each color is characterized by its x/y coordinates.

Good enough for government work, perhaps. But for HDR, with its higher luminance levels and wider color, the gamut becomes three-dimensional.

For HDR the color gamut therefore becomes a characteristic we now call the color volume. It isn’t easy to show color volume on a two-dimensional medium like the printed page or a computer screen, but one method is shown below. As the luminance becomes higher, the picture eventually turns to white. As it becomes darker, it fades to black. The traditional color gamut shown on the CIE chart is simply a slice through this color volume at a selected luminance level, such as 50%.

Three different color volumes—we still refer to them as color gamuts though their third dimension is important—are currently the most significant. The first is BT.709 (sometimes referred to as Rec.709), the color gamut used for pre-UHD/HDR formats, including standard HD.

The largest is known as BT.2020; it encompasses (roughly) the range of colors visible to the human eye (though ET might find it insufficient!).

Between these two is the color gamut used in digital cinema, known as DCI-P3.

sRGB

D65

-

OLED vs QLED – What TV is better?

Read more: OLED vs QLED – What TV is better?Supported by LG, Philips, Panasonic and Sony sell the OLED system TVs.

OLED stands for “organic light emitting diode.”

It is a fundamentally different technology from LCD, the major type of TV today.

OLED is “emissive,” meaning the pixels emit their own light.Samsung is branding its best TVs with a new acronym: “QLED”

QLED (according to Samsung) stands for “quantum dot LED TV.”

It is a variation of the common LED LCD, adding a quantum dot film to the LCD “sandwich.”

QLED, like LCD, is, in its current form, “transmissive” and relies on an LED backlight.OLED is the only technology capable of absolute blacks and extremely bright whites on a per-pixel basis. LCD definitely can’t do that, and even the vaunted, beloved, dearly departed plasma couldn’t do absolute blacks.

QLED, as an improvement over OLED, significantly improves the picture quality. QLED can produce an even wider range of colors than OLED, which says something about this new tech. QLED is also known to produce up to 40% higher luminance efficiency than OLED technology. Further, many tests conclude that QLED is far more efficient in terms of power consumption than its predecessor, OLED.

When analyzing TVs color, it may be beneficial to consider at least 3 elements:

“Color Depth”, “Color Gamut”, and “Dynamic Range”.Color Depth (or “Bit-Depth”, e.g. 8-bit, 10-bit, 12-bit) determines how many distinct color variations (tones/shades) can be viewed on a given display.

Color Gamut (e.g. WCG) determines which specific colors can be displayed from a given “Color Space” (Rec.709, Rec.2020, DCI-P3) (i.e. the color range).

Dynamic Range (SDR, HDR) determines the luminosity range of a specific color – from its darkest shade (or tone) to its brightest.

The overall brightness range of a color will be determined by a display’s “contrast ratio”, that is, the ratio of luminance between the darkest black that can be produced and the brightest white.

Color Volume is the “Color Gamut” + the “Dynamic/Luminosity Range”.

A TV’s Color Volume will not only determine which specific colors can be displayed (the color range) but also that color’s luminosity range, which will have an affect on its “brightness”, and “colorfulness” (intensity and saturation).The better the colour volume in a TV, the closer to life the colours appear.

QLED TV can express nearly all of the colours in the DCI-P3 colour space, and of those colours, express 100% of the colour volume, thereby producing an incredible range of colours.

With OLED TV, when the image is too bright, the percentage of the colours in the colour volume produced by the TV drops significantly. The colours get washed out and can only express around 70% colour volume, making the picture quality drop too.

Note. OLED TV uses organic material, so it may lose colour expression as it ages.

Resources for more reading and comparison below

www.avsforum.com/forum/166-lcd-flat-panel-displays/2812161-what-color-volume.html

www.newtechnologytv.com/qled-vs-oled/

news.samsung.com/za/qled-tv-vs-oled-tv

www.cnet.com/news/qled-vs-oled-samsungs-tv-tech-and-lgs-tv-tech-are-not-the-same/

LIGHTING

-

3D Lighting Tutorial by Amaan Kram

Read more: 3D Lighting Tutorial by Amaan Kramhttp://www.amaanakram.com/lightingT/part1.htm

The goals of lighting in 3D computer graphics are more or less the same as those of real world lighting.

Lighting serves a basic function of bringing out, or pushing back the shapes of objects visible from the camera’s view.

It gives a two-dimensional image on the monitor an illusion of the third dimension-depth.But it does not just stop there. It gives an image its personality, its character. A scene lit in different ways can give a feeling of happiness, of sorrow, of fear etc., and it can do so in dramatic or subtle ways. Along with personality and character, lighting fills a scene with emotion that is directly transmitted to the viewer.

Trying to simulate a real environment in an artificial one can be a daunting task. But even if you make your 3D rendering look absolutely photo-realistic, it doesn’t guarantee that the image carries enough emotion to elicit a “wow” from the people viewing it.

Making 3D renderings photo-realistic can be hard. Putting deep emotions in them can be even harder. However, if you plan out your lighting strategy for the mood and emotion that you want your rendering to express, you make the process easier for yourself.

Each light source can be broken down in to 4 distinct components and analyzed accordingly.

· Intensity

· Direction

· Color

· SizeThe overall thrust of this writing is to produce photo-realistic images by applying good lighting techniques.

-

HDRI Resources

Read more: HDRI ResourcesText2Light

- https://www.cgtrader.com/free-3d-models/exterior/other/10-free-hdr-panoramas-created-with-text2light-zero-shot

- https://frozenburning.github.io/projects/text2light/

- https://github.com/FrozenBurning/Text2Light

Royalty free links

- https://locationtextures.com/panoramas/

- http://www.noahwitchell.com/freebies

- https://polyhaven.com/hdris

- https://hdrmaps.com/

- https://www.ihdri.com/

- https://hdrihaven.com/

- https://www.domeble.com/

- http://www.hdrlabs.com/sibl/archive.html

- https://www.hdri-hub.com/hdrishop/hdri

- http://noemotionhdrs.net/hdrevening.html

- https://www.openfootage.net/hdri-panorama/

- https://www.zwischendrin.com/en/browse/hdri

Nvidia GauGAN360

-

Light and Matter : The 2018 theory of Physically-Based Rendering and Shading by Allegorithmic

Read more: Light and Matter : The 2018 theory of Physically-Based Rendering and Shading by Allegorithmicacademy.substance3d.com/courses/the-pbr-guide-part-1

academy.substance3d.com/courses/the-pbr-guide-part-2

Local copy:

-

Ethan Roffler interviews CG Supervisor Daniele Tosti

Read more: Ethan Roffler interviews CG Supervisor Daniele TostiEthan Roffler

I recently had the honor of interviewing this VFX genius and gained great insight into what it takes to work in the entertainment industry. Keep in mind, these questions are coming from an artist’s perspective but can be applied to any creative individual looking for some wisdom from a professional. So grab a drink, sit back, and enjoy this fun and insightful conversation.

Ethan

To start, I just wanted to say thank you so much for taking the time for this interview!Daniele

My pleasure.

When I started my career I struggled to find help. Even people in the industry at the time were not that helpful. Because of that, I decided very early on that I was going to do exactly the opposite. I spend most of my weekends talking or helping students. ;)Ethan

That’s awesome! I have also come across the same struggle! Just a heads up, this will probably be the most informal interview you’ll ever have haha! Okay, so let’s start with a small introduction!Daniele

Short introduction: I worked very hard and got lucky enough to work on great shows with great people. ;) Slightly longer version: I started working for a TV channel, very early, while I was learning about CG. Slowly made my way across the world, working along very great people and amazing shows. I learned that to be successful in this business, you have to really love what you do as much as respecting the people around you. What you do will improve to the final product; the way you work with people will make a difference in your life.

Ethan

How long have you been an artist?Daniele

Loaded question. I believe I am still trying and craving to be one. After each production I finish I realize how much I still do not know. And how many things I would like to try. I guess in my CG Sup and generalist world, being an artist is about learning as much about the latest technologies and production cycles as I can, then putting that in practice. Having said that, I do consider myself a cinematographer first, as I have been doing that for about 25 years now.Ethan

Words of true wisdom, the more I know the less I know:) How did you get your start in the industry?

How did you break into such a competitive field?Daniele

There were not many schools when I started. It was all about a few magazines, some books, and pushing software around trying to learn how to make pretty images. Opportunities opened because of that knowledge! The true break was learning to work hard to achieve a Suspension of Disbelief in my work that people would recognize as such. It’s not something everyone can do, but I was fortunate to not be scared of working hard, being a quick learner and having very good supervisors and colleagues to learn from.Ethan

Which do you think is better, having a solid art degree or a strong portfolio?Daniele

Very good question. A strong portfolio will get you a job now. A solid strong degree will likely get you a job for a longer period. Let me digress here; Working as an artist is not about being an artist, it’s about making money as an artist. Most people fail to make that difference and have either a poor career or lack the understanding to make a stable one. One should never mix art with working as an artist. You can do both only if you understand business and are fair to yourself.

Ethan

That’s probably the most helpful answer to that question I have ever heard.

What’s some advice you can offer to someone just starting out who wants to break into the industry?Daniele

Breaking in the industry is not just about knowing your art. It’s about knowing good business practices. Prepare a good demo reel based on the skill you are applying for; research all the places where you want to apply and why; send as many reels around; follow up each reel with a phone call. Business is all about right time, right place.Ethan

A follow-up question to that is: Would you consider it a bad practice to send your demo reels out in mass quantity rather than focusing on a handful of companies to research and apply for?Daniele

Depends how desperate you are… I would say research is a must. To improve your options, you need to know which company is working on what and what skills they are after. If you were selling vacuum cleaners you probably would not want to waste energy contacting shoemakers or cattle farmers.Ethan

What do you think the biggest killer of creativity and productivity is for you?Daniele

Money…If you were thinking as an artist. ;) If you were thinking about making money as an artist… then I would say “thinking that you work alone”.Ethan

Best. Answer. Ever.

What are ways you fight complacency and maintain fresh ideas, outlooks, and perspectivesDaniele

Two things: Challenge yourself to go outside your comfort zone. And think outside of the box.Ethan

What are the ways/habits you have that challenge yourself to get out of your comfort zone and think outside the box?Daniele

If you think you are a good character painter, pick up a camera and go take pictures of amazing landscapes. If you think you are good only at painting or sketching, learn how to code in python. If you cannot solve a problem, that being a project or a person, learn to ask for help or learn about looking at the problem from various perspectives. If you are introvert, learn to be extrovert. And vice versa. And so on…

Ethan

How do you avoid burnout?Daniele

Oh… I wish I learned about this earlier. I think anyone that has a passion in something is at risk of burning out. Artists, more than many, because we see the world differently and our passion goes deep. You avoid burnouts by thinking that you are in a long term plan and that you have an obligation to pay or repay your talent by supporting and cherishing yourself and your family, not your paycheck. You do this by treating your art as a business and using business skills when dealing with your career and using artistic skills only when you are dealing with a project itself.Ethan

Looking back, what was a big defining moment for you?Daniele

Recognizing that people around you, those being colleagues, friends or family, come first.

It changed my career overnight.Ethan

Who are some of your personal heroes?Daniele

Too many to list. Most recently… James Cameron; Joe Letteri; Lawrence Krauss; Richard Dawkins. Because they all mix science, art, and poetry in their own way.Ethan

Last question:

What’s your dream job? ;)Daniele

Teaching artists to be better at being business people… as it will help us all improve our lives and the careers we took…

Being a VFX artist is fundamentally based on mistrust.

This because schedules, pipelines, technology, creative calls… all have a native and naive instability to them that causes everyone to grow a genuine but beneficial lack of trust in the status quo. This is a fine balance act to build into your character. The VFX motto: “Love everyone but trust no one” is born on that.

-

Gamma correction

Read more: Gamma correction

http://www.normankoren.com/makingfineprints1A.html#Gammabox

https://en.wikipedia.org/wiki/Gamma_correction

http://www.photoscientia.co.uk/Gamma.htm

https://www.w3.org/Graphics/Color/sRGB.html

http://www.eizoglobal.com/library/basics/lcd_display_gamma/index.html

https://forum.reallusion.com/PrintTopic308094.aspx

Basically, gamma is the relationship between the brightness of a pixel as it appears on the screen, and the numerical value of that pixel. Generally Gamma is just about defining relationships.

Three main types:

– Image Gamma encoded in images

– Display Gammas encoded in hardware and/or viewing time

– System or Viewing Gamma which is the net effect of all gammas when you look back at a final image. In theory this should flatten back to 1.0 gamma.Our eyes, different camera or video recorder devices do not correctly capture luminance. (they are not linear)

Different display devices (monitor, phone screen, TV) do not display luminance correctly neither. So, one needs to correct them, therefore the gamma correction function.The human perception of brightness, under common illumination conditions (not pitch black nor blindingly bright), follows an approximate power function (note: no relation to the gamma function), with greater sensitivity to relative differences between darker tones than between lighter ones, consistent with the Stevens’ power law for brightness perception. If images are not gamma-encoded, they allocate too many bits or too much bandwidth to highlights that humans cannot differentiate, and too few bits or too little bandwidth to shadow values that humans are sensitive to and would require more bits/bandwidth to maintain the same visual quality.

https://blog.amerlux.com/4-things-architects-should-know-about-lumens-vs-perceived-brightness/

cones manage color receptivity, rods determine how large our pupils should be. The larger (more dilated) our pupils are, the more light enters our eyes. In dark situations, our rods dilate our pupils so we can see better. This impacts how we perceive brightness.

https://www.cambridgeincolour.com/tutorials/gamma-correction.htm

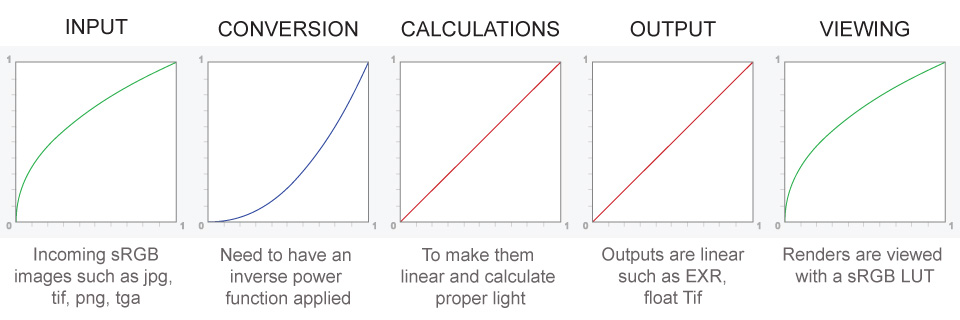

A gamma encoded image has to have “gamma correction” applied when it is viewed — which effectively converts it back into light from the original scene. In other words, the purpose of gamma encoding is for recording the image — not for displaying the image. Fortunately this second step (the “display gamma”) is automatically performed by your monitor and video card. The following diagram illustrates how all of this fits together:

Display gamma

The display gamma can be a little confusing because this term is often used interchangeably with gamma correction, since it corrects for the file gamma. This is the gamma that you are controlling when you perform monitor calibration and adjust your contrast setting. Fortunately, the industry has converged on a standard display gamma of 2.2, so one doesn’t need to worry about the pros/cons of different values.Gamma encoding of images is used to optimize the usage of bits when encoding an image, or bandwidth used to transport an image, by taking advantage of the non-linear manner in which humans perceive light and color. Human response to luminance is also biased. Especially sensible to dark areas.

Thus, the human visual system has a non-linear response to the power of the incoming light, so a fixed increase in power will not have a fixed increase in perceived brightness.

We perceive a value as half bright when it is actually 18% of the original intensity not 50%. As such, our perception is not linear.You probably already know that a pixel can have any ‘value’ of Red, Green, and Blue between 0 and 255, and you would therefore think that a pixel value of 127 would appear as half of the maximum possible brightness, and that a value of 64 would represent one-quarter brightness, and so on. Well, that’s just not the case.

Pixar Color Management

https://renderman.pixar.com/color-management

– Why do we need linear gamma?

Because light works linearly and therefore only works properly when it lights linear values.– Why do we need to view in sRGB?

Because the resulting linear image in not suitable for viewing, but contains all the proper data. Pixar’s IT viewer can compensate by showing the rendered image through a sRGB look up table (LUT), which is identical to what will be the final image after the sRGB gamma curve is applied in post.This would be simple enough if every software would play by the same rules, but they don’t. In fact, the default gamma workflow for many 3D software is incorrect. This is where the knowledge of a proper imaging workflow comes in to save the day.

Cathode-ray tubes have a peculiar relationship between the voltage applied to them, and the amount of light emitted. It isn’t linear, and in fact it follows what’s called by mathematicians and other geeks, a ‘power law’ (a number raised to a power). The numerical value of that power is what we call the gamma of the monitor or system.

Thus. Gamma describes the nonlinear relationship between the pixel levels in your computer and the luminance of your monitor (the light energy it emits) or the reflectance of your prints. The equation is,

Luminance = C * value^gamma + black level

– C is set by the monitor Contrast control.

– Value is the pixel level normalized to a maximum of 1. For an 8 bit monitor with pixel levels 0 – 255, value = (pixel level)/255.

– Black level is set by the (misnamed) monitor Brightness control. The relationship is linear if gamma = 1. The chart illustrates the relationship for gamma = 1, 1.5, 1.8 and 2.2 with C = 1 and black level = 0.

Gamma affects middle tones; it has no effect on black or white. If gamma is set too high, middle tones appear too dark. Conversely, if it’s set too low, middle tones appear too light.

The native gamma of monitors– the relationship between grid voltage and luminance– is typically around 2.5, though it can vary considerably. This is well above any of the display standards, so you must be aware of gamma and correct it.

A display gamma of 2.2 is the de facto standard for the Windows operating system and the Internet-standard sRGB color space.

The old standard for Mcintosh and prepress file interchange is 1.8. It is now 2.2 as well.

Video cameras have gammas of approximately 0.45– the inverse of 2.2. The viewing or system gamma is the product of the gammas of all the devices in the system– the image acquisition device (film+scanner or digital camera), color lookup table (LUT), and monitor. System gamma is typically between 1.1 and 1.5. Viewing flare and other factor make images look flat at system gamma = 1.0.

Most laptop LCD screens are poorly suited for critical image editing because gamma is extremely sensitive to viewing angle.

More about screens

https://www.cambridgeincolour.com/tutorials/gamma-correction.htm

CRT Monitors. Due to an odd bit of engineering luck, the native gamma of a CRT is 2.5 — almost the inverse of our eyes. Values from a gamma-encoded file could therefore be sent straight to the screen and they would automatically be corrected and appear nearly OK. However, a small gamma correction of ~1/1.1 needs to be applied to achieve an overall display gamma of 2.2. This is usually already set by the manufacturer’s default settings, but can also be set during monitor calibration.

LCD Monitors. LCD monitors weren’t so fortunate; ensuring an overall display gamma of 2.2 often requires substantial corrections, and they are also much less consistent than CRT’s. LCDs therefore require something called a look-up table (LUT) in order to ensure that input values are depicted using the intended display gamma (amongst other things). See the tutorial on monitor calibration: look-up tables for more on this topic.

About black level (brightness). Your monitor’s brightness control (which should actually be called black level) can be adjusted using the mostly black pattern on the right side of the chart. This pattern contains two dark gray vertical bars, A and B, which increase in luminance with increasing gamma. (If you can’t see them, your black level is way low.) The left bar (A) should be just above the threshold of visibility opposite your chosen gamma (2.2 or 1.8)– it should be invisible where gamma is lower by about 0.3. The right bar (B) should be distinctly visible: brighter than (A), but still very dark. This chart is only for monitors; it doesn’t work on printed media.

The 1.8 and 2.2 gray patterns at the bottom of the image represent a test of monitor quality and calibration. If your monitor is functioning properly and calibrated to gamma = 2.2 or 1.8, the corresponding pattern will appear smooth neutral gray when viewed from a distance. Any waviness, irregularity, or color banding indicates incorrect monitor calibration or poor performance.

Another test to see whether one’s computer monitor is properly hardware adjusted and can display shadow detail in sRGB images properly, they should see the left half of the circle in the large black square very faintly but the right half should be clearly visible. If not, one can adjust their monitor’s contrast and/or brightness setting. This alters the monitor’s perceived gamma. The image is best viewed against a black background.

This procedure is not suitable for calibrating or print-proofing a monitor. It can be useful for making a monitor display sRGB images approximately correctly, on systems in which profiles are not used (for example, the Firefox browser prior to version 3.0 and many others) or in systems that assume untagged source images are in the sRGB colorspace.

On some operating systems running the X Window System, one can set the gamma correction factor (applied to the existing gamma value) by issuing the command xgamma -gamma 0.9 for setting gamma correction factor to 0.9, and xgamma for querying current value of that factor (the default is 1.0). In OS X systems, the gamma and other related screen calibrations are made through the System Preference

https://www.kinematicsoup.com/news/2016/6/15/gamma-and-linear-space-what-they-are-how-they-differ

Linear color space means that numerical intensity values correspond proportionally to their perceived intensity. This means that the colors can be added and multiplied correctly. A color space without that property is called ”non-linear”. Below is an example where an intensity value is doubled in a linear and a non-linear color space. While the corresponding numerical values in linear space are correct, in the non-linear space (gamma = 0.45, more on this later) we can’t simply double the value to get the correct intensity.

The need for gamma arises for two main reasons: The first is that screens have been built with a non-linear response to intensity. The other is that the human eye can tell the difference between darker shades better than lighter shades. This means that when images are compressed to save space, we want to have greater accuracy for dark intensities at the expense of lighter intensities. Both of these problems are resolved using gamma correction, which is to say the intensity of every pixel in an image is put through a power function. Specifically, gamma is the name given to the power applied to the image.

CRT screens, simply by how they work, apply a gamma of around 2.2, and modern LCD screens are designed to mimic that behavior. A gamma of 2.2, the reciprocal of 0.45, when applied to the brightened images will darken them, leaving the original image.

-

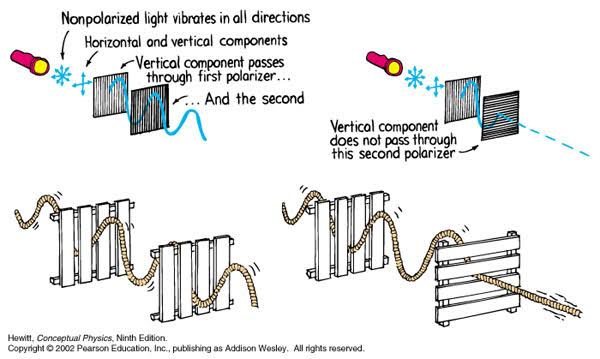

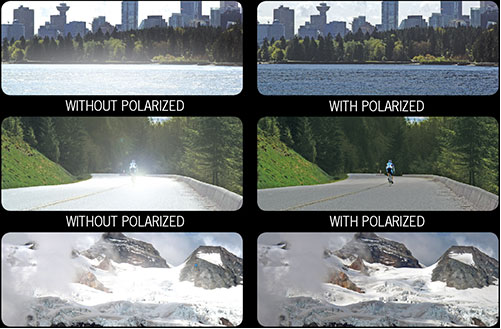

Polarised vs unpolarized filtering

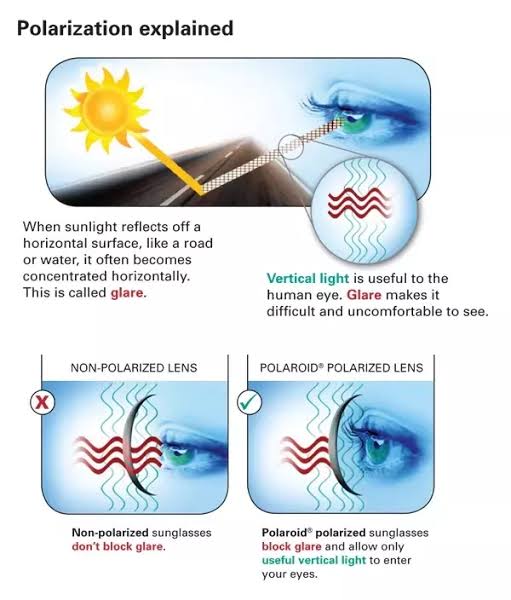

Read more: Polarised vs unpolarized filteringA light wave that is vibrating in more than one plane is referred to as unpolarized light. …

Polarized light waves are light waves in which the vibrations occur in a single plane. The process of transforming unpolarized light into polarized light is known as polarization.

en.wikipedia.org/wiki/Polarizing_filter_(photography)

The most common use of polarized technology is to reduce lighting complexity on the subject.

Details such as glare and hard edges are not removed, but greatly reduced.This method is usually used in VFX to capture raw images with the least amount of specular diffusion or pollution, thus allowing artists to infer detail back through typical shading and rendering techniques and on demand.

Light reflected from a non-metallic surface becomes polarized; this effect is maximum at Brewster’s angle, about 56° from the vertical for common glass.

A polarizer rotated to pass only light polarized in the direction perpendicular to the reflected light will absorb much of it. This absorption allows glare reflected from, for example, a body of water or a road to be reduced. Reflections from shiny surfaces (e.g. vegetation, sweaty skin, water surfaces, glass) are also reduced. This allows the natural color and detail of what is beneath to come through. Reflections from a window into a dark interior can be much reduced, allowing it to be seen through. (The same effects are available for vision by using polarizing sunglasses.)

www.physicsclassroom.com/class/light/u12l1e.cfm

Some of the light coming from the sky is polarized (bees use this phenomenon for navigation). The electrons in the air molecules cause a scattering of sunlight in all directions. This explains why the sky is not dark during the day. But when looked at from the sides, the light emitted from a specific electron is totally polarized.[3] Hence, a picture taken in a direction at 90 degrees from the sun can take advantage of this polarization.

Use of a polarizing filter, in the correct direction, will filter out the polarized component of skylight, darkening the sky; the landscape below it, and clouds, will be less affected, giving a photograph with a darker and more dramatic sky, and emphasizing the clouds.

There are two types of polarizing filters readily available, linear and “circular”, which have exactly the same effect photographically. But the metering and auto-focus sensors in certain cameras, including virtually all auto-focus SLRs, will not work properly with linear polarizers because the beam splitters used to split off the light for focusing and metering are polarization-dependent.

Polarizing filters reduce the light passed through to the film or sensor by about one to three stops (2–8×) depending on how much of the light is polarized at the filter angle selected. Auto-exposure cameras will adjust for this by widening the aperture, lengthening the time the shutter is open, and/or increasing the ASA/ISO speed of the camera.

www.adorama.com/alc/nd-filter-vs-polarizer-what%25e2%2580%2599s-the-difference

Neutral Density (ND) filters help control image exposure by reducing the light that enters the camera so that you can have more control of your depth of field and shutter speed. Polarizers or polarizing filters work in a similar way, but the difference is that they selectively let light waves of a certain polarization pass through. This effect helps create more vivid colors in an image, as well as manage glare and reflections from water surfaces. Both are regarded as some of the best filters for landscape and travel photography as they reduce the dynamic range in high-contrast images, thus enabling photographers to capture more realistic and dramatic sceneries.

shopfelixgray.com/blog/polarized-vs-non-polarized-sunglasses/

www.eyebuydirect.com/blog/difference-polarized-nonpolarized-sunglasses/

-

What light is best to illuminate gems for resale

Read more: What light is best to illuminate gems for resalewww.palagems.com/gem-lighting2

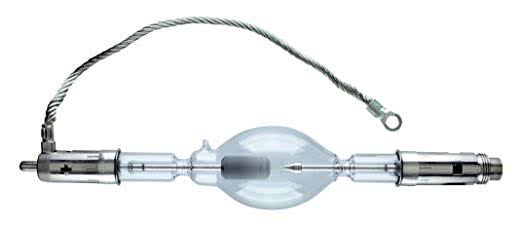

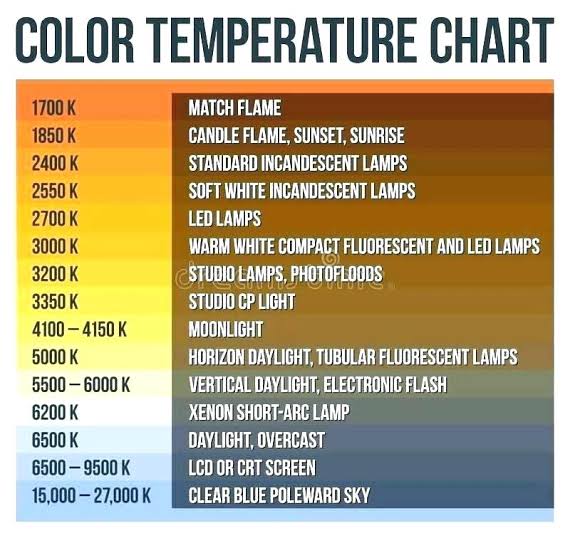

Artificial light sources, not unlike the diverse phases of natural light, vary considerably in their properties. As a result, some lamps render an object’s color better than others do.

The most important criterion for assessing the color-rendering ability of any lamp is its spectral power distribution curve.

Natural daylight varies too much in strength and spectral composition to be taken seriously as a lighting standard for grading and dealing colored stones. For anything to be a standard, it must be constant in its properties, which natural light is not.

For dealers in particular to make the transition from natural light to an artificial light source, that source must offer:

1- A degree of illuminance at least as strong as the common phases of natural daylight.

2- Spectral properties identical or comparable to a phase of natural daylight.A source combining these two things makes gems appear much the same as when viewed under a given phase of natural light. From the viewpoint of many dealers, this corresponds to a naturalappearance.

The 6000° Kelvin xenon short-arc lamp appears closest to meeting the criteria for a standard light source. Besides the strong illuminance this lamp affords, its spectrum is very similar to CIE standard illuminants of similar color temperature.

COLLECTIONS

| Featured AI

| Design And Composition

| Explore posts

POPULAR SEARCHES

unreal | pipeline | virtual production | free | learn | photoshop | 360 | macro | google | nvidia | resolution | open source | hdri | real-time | photography basics | nuke

FEATURED POSTS

-

JavaScript how-to free resources

-

Free fonts

-

NVidia – High-Fidelity 3D Mesh Generation at Scale with Meshtron

-

Rec-2020 – TVs new color gamut standard used by Dolby Vision?

-

Matt Gray – How to generate a profitable business

-

What the Boeing 737 MAX’s crashes can teach us about production business – the effects of commoditisation

-

Yann Lecun: Meta AI, Open Source, Limits of LLMs, AGI & the Future of AI | Lex Fridman Podcast #416

-

Image rendering bit depth

Social Links

DISCLAIMER – Links and images on this website may be protected by the respective owners’ copyright. All data submitted by users through this site shall be treated as freely available to share.