COMPOSITION

-

Photography basics: Depth of Field and composition

Depth of field (DOF) is the range of distances within which subjects appear in acceptable focus in a photo.

Aperture has a huge effect on the depth of field. Changing the f-stop (f/#) of a lens changes the aperture and, with it, the DOF.

An f-stop is simply a number that tells you the size of the aperture. That’s how the f-stop is related to aperture (and DOF).

If you increase the f-stop, you increase the DOF, the area in focus (and decrease the aperture). Conversely, decreasing the f-stop decreases the DOF (and increases the aperture).
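The inverse relation between f-stop and aperture size follows from the standard definition of the f-number, N = focal length / aperture diameter. The sketch below is only an illustration: the 50 mm focal length and the function names are assumptions, not something taken from the text above.

```python
import math

def aperture_diameter_mm(focal_length_mm: float, f_number: float) -> float:
    """Aperture (entrance pupil) diameter from N = focal length / diameter."""
    return focal_length_mm / f_number

def aperture_area_mm2(focal_length_mm: float, f_number: float) -> float:
    """Area of a circular aperture with that diameter."""
    radius = aperture_diameter_mm(focal_length_mm, f_number) / 2
    return math.pi * radius ** 2

# Hypothetical 50 mm lens: a larger f-stop gives a smaller opening.
for n in (2, 4, 8, 16):
    d = aperture_diameter_mm(50, n)
    a = aperture_area_mm2(50, n)
    print(f"f/{n}: diameter {d:.2f} mm, area {a:.1f} mm^2")
```

Doubling the f-stop halves the diameter and quarters the area, which is why f-stop values end up squared in exposure calculations.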

The red cone in the figure is an angular representation of the resolution of the system, whereas the dotted lines indicate the aperture coverage. Where the lines of the two cones intersect defines the total range of the depth of field.

This image explains why the longer the depth of field, the greater the range of clarity.

DESIGN

-

Kristina Kashtanova – “This is how GPT-4 sees and hears itself”

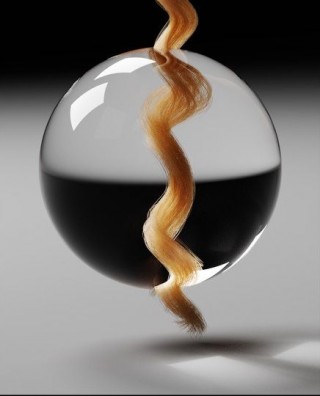

“I used GPT-4 to describe itself. Then I used its description to generate an image, a video based on this image and a soundtrack.

Tools I used: GPT-4, Midjourney, Kaiber AI, Mubert, RunwayML

This is the description I used that GPT-4 had of itself as a prompt to text-to-image, image-to-video, and text-to-music. I put the video and sound together in RunwayML.

GPT-4 described itself as: “Imagine a sleek, metallic sphere with a smooth surface, representing the vast knowledge contained within the model. The sphere emits a soft, pulsating glow that shifts between various colors, symbolizing the dynamic nature of the AI as it processes information and generates responses. The sphere appears to float in a digital environment, surrounded by streams of data and code, reflecting the complex algorithms and computing power behind the AI”

-

Glenn Marshall – The Crow

Created with AI ‘Style Transfer’ processes to transform video footage into AI video art.

COLOR

-

Sensitivity of human eye

http://www.wikilectures.eu/index.php/Spectral_sensitivity_of_the_human_eye

http://www.normankoren.com/Human_spectral_sensitivity_small.jpg

The spectral sensitivity of the eye is influenced by light intensity, which determines the level of activity of the cone and rod cells. This is the main characteristic of human vision. Sensitivity to individual colors, in other words to wavelengths of the light spectrum, is explained by the RGB (red-green-blue) theory. This theory assumes that there are three kinds of cones, selectively sensitive to red (700-630 nm), green (560-500 nm), and blue (490-450 nm) light. Their mutual interaction allows us to perceive all colors of the spectrum.

http://weeklysciencequiz.blogspot.com/2013/01/violet-skies-are-for-birds.html

The sensitivity of the human eye to light increases as light intensity decreases. In daylight conditions, the cone cells respond, and the eye is most sensitive at 555 nm. In darkness, the rod cells respond, and the eye is most sensitive at 507 nm.

As light intensity decreases, vision shifts from the cones to the rods. Lower light intensity promotes the accumulation of rhodopsin, which activates the rods and lets them respond to light stimuli of much lower intensity.

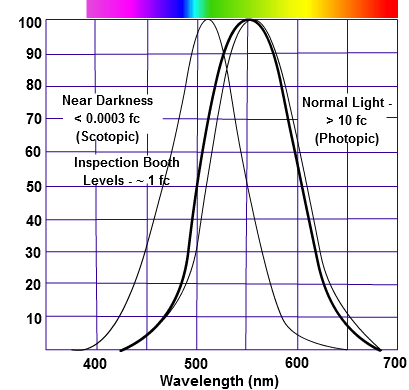

The three curves in the figure above show the normalized response of an average human eye to various amounts of ambient light. The shift in sensitivity occurs because two types of photoreceptors, called cones and rods, are responsible for the eye’s response to light. The curve on the right shows the eye’s response under normal lighting conditions; this is called the photopic response. The cones respond to light under these conditions.

As mentioned previously, cones are composed of three different photo pigments that enable color perception. This curve peaks at 555 nanometers, which means that under normal lighting conditions, the eye is most sensitive to a yellowish-green color. When the light levels drop to near total darkness, the response of the eye changes significantly as shown by the scotopic response curve on the left. At this level of light, the rods are most active and the human eye is more sensitive to the light present, and less sensitive to the range of color. Rods are highly sensitive to light but are comprised of a single photo pigment, which accounts for the loss in ability to discriminate color. At this very low light level, sensitivity to blue, violet, and ultraviolet is increased, but sensitivity to yellow and red is reduced. The heavier curve in the middle represents the eye’s response at the ambient light level found in a typical inspection booth. This curve peaks at 550 nanometers, which means the eye is most sensitive to yellowish-green color at this light level. Fluorescent penetrant inspection materials are designed to fluoresce at around 550 nanometers to produce optimal sensitivity under dim lighting conditions.

-

Willem Zwarthoed – Aces gamut in VFX production pdf

https://www.provideocoalition.com/color-management-part-12-introducing-aces/

Local copy:

https://www.slideshare.net/hpduiker/acescg-a-common-color-encoding-for-visual-effects-applications

-

Weta Digital – Manuka Raytracer and Gazebo GPU renderers – pipeline

https://jo.dreggn.org/home/2018_manuka.pdf

http://www.fxguide.com/featured/manuka-weta-digitals-new-renderer/

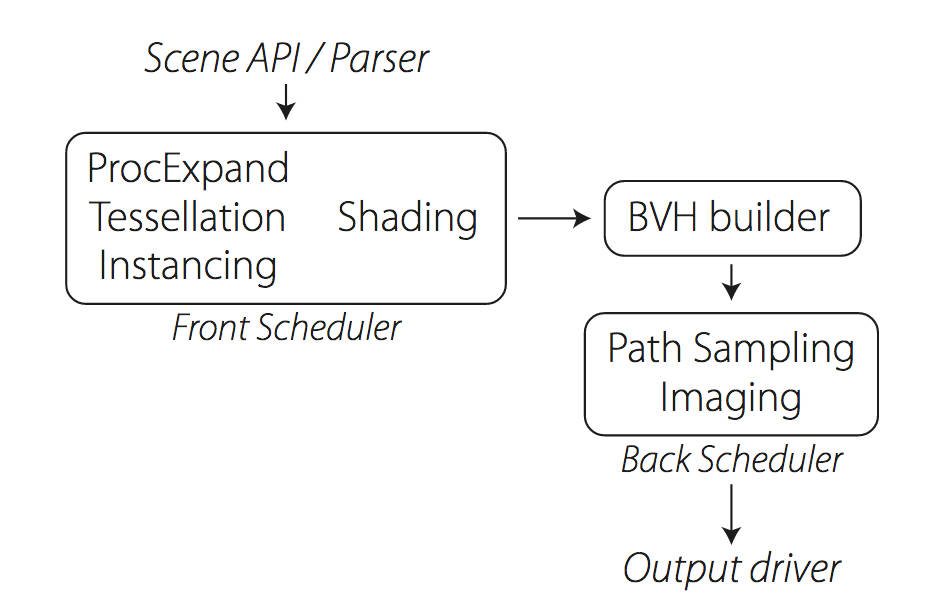

The Manuka rendering architecture has been designed in the spirit of the classic reyes rendering architecture. In its core, reyes is based on stochastic rasterisation of micropolygons, facilitating depth of field, motion blur, high geometric complexity, and programmable shading.

This is commonly achieved with Monte Carlo path tracing, using a paradigm often called shade-on-hit, in which the renderer alternates tracing rays with running shaders on the various ray hits. The shaders take the role of generating the inputs of the local material structure which is then used by path sampling logic to evaluate contributions and to inform what further rays to cast through the scene.

Over the years, however, the expectations have risen substantially when it comes to image quality. Computing pictures which are indistinguishable from real footage requires accurate simulation of light transport, which is most often performed using some variant of Monte Carlo path tracing. Unfortunately this paradigm requires random memory accesses to the whole scene and does not lend itself well to a rasterisation approach at all.

Manuka is both a uni-directional and bidirectional path tracer and encompasses multiple importance sampling (MIS). Interestingly, and importantly for production character skin work, it is the first major production renderer to incorporate spectral MIS in the form of a new ‘Hero Spectral Sampling’ technique, which was recently published at Eurographics Symposium on Rendering 2014.

Manuka proposes a shade-before-hit paradigm instead, minimising I/O strain (and some memory costs) on the system. It leverages locality of reference by running pattern generation shaders before executing light transport simulation by path sampling, “compressing” any bvh structure as needed, and as such also limiting duplication of source data.

The difference from reyes is that instead of baking colors into the geometry, Manuka bakes surface closures. This means that light transport is still calculated with path tracing, but all texture lookups etc. are done up-front and baked into the geometry. The main drawback of this method is that geometry has to be tessellated to its highest, stable topology before shading can be evaluated properly, hence the high cost to first pixel. Even a basic four-vertex square becomes a much more complex model with this approach.

Manuka uses the RenderMan Shading Language (rsl) for programmable shading [Pixar Animation Studios 2015], but we do not invoke rsl shaders when intersecting a ray with a surface (often called shade-on-hit). Instead, we pre-tessellate and pre-shade all the input geometry in the front end of the renderer.

This way, we can efficiently order shading computations to support near-optimal texture locality, vectorisation, and parallelism. This system avoids repeated evaluation of shaders at the same surface point, and presents a minimal amount of memory to be accessed during light transport time. An added benefit is that the acceleration structure for ray tracing (a bounding volume hierarchy, bvh) is built once on the final tessellated geometry, which allows us to ray trace more efficiently than multi-level bvhs and avoids costly caching of on-demand tessellated micropolygons and the associated scheduling issues.

For the shading reasons above, in terms of AOVs, the studio approach is to succeed at combining complex shading with ray paths in the render rather than pass a multi-pass render to compositing.

As for the spectral rendering component, the light transport stage is fully spectral, using a continuously sampled wavelength which is traced with each path and used to apply the spectral camera sensitivity of the sensor. This makes it possible to faithfully support any degree of observer metamerism in the camera footage the renders are intended to match, as well as complex materials which require wavelength dependent phenomena such as diffraction, dispersion, interference, iridescence, or chromatic extinction and Rayleigh scattering in participating media.

As opposed to the original reyes paper, we use bilinear interpolation of these bsdf inputs later when evaluating bsdfs per path vertex during light transport. This improves temporal stability of geometry which moves very slowly with respect to the pixel raster.
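Bilinear interpolation of values stored at the four corners of a quad can be sketched as below. This is a generic illustration and not Manuka's code: the corner layout, the (u, v) parameterisation and the function name are all assumptions.

```python
def bilerp(c00, c10, c01, c11, u, v):
    """Bilinearly interpolate per-corner values across a quad.

    c00 sits at (u, v) = (0, 0), c10 at (1, 0), c01 at (0, 1), c11 at (1, 1).
    Each corner is a sequence of floats, e.g. an RGB shading input.
    """
    # Interpolate along u on the two edges, then along v between them.
    bottom = [a + (b - a) * u for a, b in zip(c00, c10)]
    top = [a + (b - a) * u for a, b in zip(c01, c11)]
    return [b + (t - b) * v for b, t in zip(bottom, top)]

# Hypothetical corner colors on a single micropolygon.
print(bilerp([0.0, 0.0, 0.0], [1.0, 0.0, 0.0],
             [0.0, 1.0, 0.0], [1.0, 1.0, 0.0], 0.5, 0.5))
```

Because the result varies continuously with (u, v), a hit point that drifts slowly across the quad changes its shading inputs smoothly instead of jumping from one constant value to the next.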

In terms of the pipeline, everything rendered at Weta was already completely interwoven with their deep data pipeline. Manuka very much was written with deep data in mind. Here, Manuka not so much extends the deep capabilities, rather it fully matches the already extremely complex and powerful setup Weta Digital already enjoy with RenderMan. For example, an ape in a scene can be selected, its ID is available and a NUKE artist can then paint in 3D say a hand and part of the way up the neutral posed ape.

We called our system Manuka, as a respectful nod to reyes: we had heard a story from a former ILM employee about how reyes got its name from how fond the early Pixar people were of their lunches at Point Reyes, and decided to name our system after our surrounding natural environment, too. Manuka is a kind of tea tree very common in New Zealand which has very many very small leaves, in analogy to micropolygons in a tree structure for ray tracing. It also happens to be the case that Weta Digital’s main site is on Manuka Street.

LIGHTING

-

Disney’s Moana Island Scene – Free data set

https://www.disneyanimation.com/resources/moana-island-scene/

This data set contains everything necessary to render a version of the Motunui island featured in the 2016 film Moana.

-

Photography basics: Exposure Value vs Photographic Exposure vs Il/Luminance vs Pixel luminance measurements

Also see: https://www.pixelsham.com/2015/05/16/how-aperture-shutter-speed-and-iso-affect-your-photos/

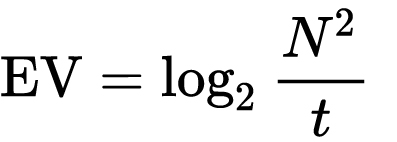

In photography, exposure value (EV) is a number that represents a combination of a camera’s shutter speed and f-number, such that all combinations that yield the same exposure have the same EV (for any fixed scene luminance).

The EV concept was developed in an attempt to simplify choosing among combinations of equivalent camera settings. Although all camera settings with the same EV nominally give the same exposure, they do not necessarily give the same picture. EV is also used to indicate an interval on the photographic exposure scale, with 1 EV corresponding to a standard power-of-2 exposure step, commonly referred to as a stop.

EV 0 corresponds to an exposure time of 1 sec and a relative aperture of f/1.0. If the EV is known, it can be used to select combinations of exposure time and f-number.

Note that EV is not the same as photographic exposure. Photographic exposure is defined as how much light hits the camera’s sensor. It depends on the camera settings, mainly aperture and shutter speed. Exposure value (EV) is a number that represents the exposure setting of the camera.

Thus, strictly, EV is not a measure of luminance (indirect or reflected exposure) or illuminance (incidental exposure); rather, an EV corresponds to a luminance (or illuminance) for which a camera with a given ISO speed would use the indicated EV to obtain the nominally correct exposure. Nonetheless, it is common practice among photographic equipment manufacturers to express luminance in EV for ISO 100 speed, as when specifying metering range or autofocus sensitivity.

The exposure depends on two things: how much light gets through the lenses to the camera’s sensor and for how long the sensor is exposed. The former is a function of the aperture value while the latter is a function of the shutter speed. Exposure value is a number that represents this potential amount of light that could hit the sensor. It is important to understand that exposure value is a measure of how exposed the sensor is to light and not a measure of how much light actually hits the sensor. The exposure value is independent of how lit the scene is. For example a pair of aperture value and shutter speed represents the same exposure value both if the camera is used during a very bright day or during a dark night.

Each exposure value number represents all the possible shutter and aperture settings that result in the same exposure. Although the exposure value is the same for different combinations of aperture values and shutter speeds the resulting photo can be very different (the aperture controls the depth of field while shutter speed controls how much motion is captured).

EV 0.0 is defined as the exposure when setting the aperture to f-number 1.0 and the shutter speed to 1 second. All other exposure values are relative to that number. Exposure values are on a base two logarithmic scale. This means that every single step of EV – plus or minus 1 – represents the exposure (actual light that hits the sensor) being halved or doubled.
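To illustrate how one EV covers many equivalent settings, the sketch below lists the shutter time each f-number needs to reach a given EV, using the standard relation 2^EV = N² / t. The function name and the chosen f-numbers are illustrative assumptions.

```python
def shutter_time_for_ev(ev: float, f_number: float) -> float:
    """Shutter time in seconds that gives `ev` at `f_number` (t = N^2 / 2^EV)."""
    return f_number ** 2 / 2 ** ev

# All of these settings share EV 10, but depth of field and motion blur differ.
for n in (2.8, 4, 5.6, 8, 11, 16):
    t = shutter_time_for_ev(10, n)
    print(f"f/{n}: {t:.4f} s (about 1/{round(1 / t)})")
```

Marked f-numbers such as 2.8, 5.6 and 11 are rounded, so the computed times land near the familiar shutter speeds rather than exactly on them.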

https://www.streetdirectory.com/travel_guide/141307/photography/exposure_value_ev_and_exposure_compensation.html

Formula

https://en.wikipedia.org/wiki/Exposure_value

https://www.scantips.com/lights/math.html

EV = log₂(N² / t)

which means 2^EV = N² / t

where

- N is the relative aperture (f-number). Important: f/stop values must first be squared in most calculations

- t is the exposure time (shutter speed) in seconds

EV 0 corresponds to an exposure time of 1 sec and an aperture of f/1.0.

Example: If f/16 and 1/4 second, then this is:

(N² / t) = (16 × 16 ÷ 1/4) = (16 × 16 × 4) = 1024.

Log₂(1024) is EV 10. Meaning, 2^10 = 1024.
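The worked example above can be checked with a few lines of Python. This is a minimal sketch of EV = log₂(N² / t); the function name is an assumption.

```python
import math

def exposure_value(f_number: float, shutter_s: float) -> float:
    """EV from f-number N and exposure time t in seconds: log2(N^2 / t)."""
    return math.log2(f_number ** 2 / shutter_s)

print(exposure_value(16, 1 / 4))   # f/16 at 1/4 s -> 10.0
print(exposure_value(1.0, 1.0))    # the EV 0 reference -> 0.0
```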

Collecting photographic exposure using Light Meters

The exposure meter in the camera does not know whether the subject itself is bright or not. It simply measures the amount of light that comes in, and makes a guess based on that. The camera will aim for 18% gray, meaning if you take a photo of an entirely white surface, and an entirely black surface you should get two identical images which both are gray (at least in theory)
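The 18% gray behaviour can be sketched numerically. The model below is a deliberate simplification (a meter that scales the average scene value to 0.18 in linear light), and the reflectance values and function names are illustrative assumptions, not measured data.

```python
import math

MIDDLE_GRAY = 0.18  # the meter's target, in linear light

def metered_gain(average_value: float) -> float:
    """Gain a simple averaging meter applies to land the average on 18% gray."""
    return MIDDLE_GRAY / average_value

def compensation_stops(average_value: float) -> float:
    """Exposure compensation (in stops) needed to undo the meter's shift."""
    return math.log2(average_value / MIDDLE_GRAY)

# Hypothetical uniform surfaces: both end up rendered as the same gray.
for name, reflectance in (("white wall", 0.90), ("black cloth", 0.04)):
    rendered = reflectance * metered_gain(reflectance)
    print(f"{name}: rendered {rendered:.2f}, "
          f"compensate {compensation_stops(reflectance):+.2f} stops")
```

In this toy model the white surface needs positive compensation and the black one negative compensation to be rendered at their true brightness.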

https://en.wikipedia.org/wiki/Light_meter

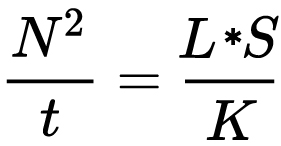

For reflected-light meters, camera settings are related to ISO speed and subject luminance by the reflected-light exposure equation:

N² / t = L × S / K

where

- N is the relative aperture (f-number)

- t is the exposure time (“shutter speed”) in seconds

- L is the average scene luminance

- S is the ISO arithmetic speed

- K is the reflected-light meter calibration constant

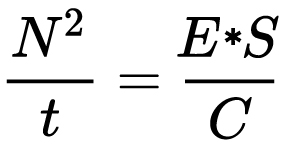

For incident-light meters, camera settings are related to ISO speed and subject illuminance by the incident-light exposure equation:

N² / t = E × S / C

where

- E is the illuminance (in lux)

- C is the incident-light meter calibration constant

Two values for K are in common use: 12.5 (Canon, Nikon, and Sekonic) and 14 (Minolta, Kenko, and Pentax); the difference between the two values is approximately 1/6 EV.

For C, a value of 250 is commonly used. It is common practice among photographic equipment manufacturers to also express luminance in EV for ISO 100 speed. Using K = 12.5, the relationship between EV at ISO 100 and luminance L is then:

L = 2^(EV − 3)

The situation with incident-light meters is more complicated than that for reflected-light meters, because the calibration constant C depends on the sensor type. Illuminance is measured with a flat sensor; a typical value for C is 250 with illuminance in lux. Using C = 250, the relationship between EV at ISO 100 and illuminance E is then:

E = 2.5 × 2^EV
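Both relationships follow from setting 2^EV equal to the right-hand side of the meter equations. A minimal sketch, assuming the constants quoted above (K = 12.5, C = 250) and hypothetical function names:

```python
import math

def luminance_from_ev(ev: float, iso: float = 100, k: float = 12.5) -> float:
    """Luminance L (cd/m^2) from 2^EV = L * S / K."""
    return 2 ** ev * k / iso

def illuminance_from_ev(ev: float, iso: float = 100, c: float = 250) -> float:
    """Illuminance E (lux) from 2^EV = E * S / C."""
    return 2 ** ev * c / iso

print(luminance_from_ev(3))     # 2^(3 - 3) = 1.0 cd/m^2
print(illuminance_from_ev(0))   # 2.5 * 2^0 = 2.5 lux

# Gap between the two common K values, expressed in EV (roughly 1/6).
print(math.log2(14 / 12.5))
```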

https://nofilmschool.com/2018/03/want-easier-and-faster-way-calculate-exposure-formula

Three basic factors go into the exposure formula itself: aperture, shutter, and ISO, plus a light meter calibration constant.

f-stop²/shutter (in seconds) = lux * ISO/C

If you know at least four of those variables, you can calculate the missing one.

So, say you want to figure out how much light you’re going to need in order to shoot at a certain f-stop. Well, all you do is plug in your values (you should know the f-stop, ISO, and your light meter calibration constant) into the formula below:

lux = C (f-stop²/shutter (in seconds))/ISO
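As a quick check of the rearranged formula, here is a minimal sketch. The function name and the example settings (f/4, 1/50 s, ISO 800) are hypothetical, and C = 250 is the commonly used constant mentioned earlier.

```python
def required_lux(f_stop: float, shutter_s: float, iso: float, c: float = 250) -> float:
    """Illuminance needed for the given settings: lux = C * (N^2 / t) / ISO."""
    return c * (f_stop ** 2 / shutter_s) / iso

# Hypothetical setup: f/4 at 1/50 s and ISO 800.
print(f"{required_lux(4, 1 / 50, 800):.0f} lux")
```

Opening up by one stop, doubling the exposure time, or doubling the ISO each halves the amount of light needed.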

Exposure Value Calculator:

https://snapheadshots.com/resources/exposure-and-light-calculator

https://www.scantips.com/lights/exposurecalc.html

https://www.pointsinfocus.com/tools/exposure-settings-ev-calculator/#google_vignette

From that perspective, an exposure stop is a measurement of exposure that provides a universal scale for the increase and decrease in light reaching the image sensor due to changes in shutter speed, ISO and f-stop.

±1 stop is a doubling or halving of the amount of light let in when taking a photo.

1 EV is just another way to say one stop of exposure change. One major use of EV (Exposure Value) is to measure any change of exposure, where one EV implies a change of one stop, as when we apply exposure compensation in the camera.

If the picture comes out too dark, our manual exposure could correct the next one by directly adjusting one of the three exposure controls (f/stop, shutter speed, or ISO). Or if using camera automation, the camera meter is controlling it, but we might apply +1 EV exposure compensation (or +1 EV flash compensation) to make the result goal brighter, as desired. This use of 1 EV is just another way to say one stop of exposure change.

On a perfect day, the difference between sampling the sky and the sun with diffusing spot meters is about 3.2 EV:

~15.4 EV for the sun, ~12.2 EV for the sky

That is as a ballpark. All still influenced by surroundings, accuracy parameters, fov of the sensor…
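Since each EV is a factor of two, a difference in EV converts to a light ratio as 2 raised to that difference. A small sketch using the ballpark readings above (the function name is an assumption):

```python
def light_ratio(ev_a: float, ev_b: float) -> float:
    """How many times more light reading A represents than reading B."""
    return 2 ** (ev_a - ev_b)

# Ballpark sun and sky readings: a 3.2 EV gap.
print(f"sun/sky ratio: {light_ratio(15.4, 12.2):.2f}x")
```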

EV calculator

https://www.scantips.com/lights/evchart.html#calc

http://www.fredparker.com/ultexp1.htm

Exposure value is basically used to indicate an interval on the photographic exposure scale, with a difference of 1 EV corresponding to a standard power-of-2 exposure step, also commonly referred to as a “stop”.

https://contrastly.com/a-guide-to-understanding-exposure-value-ev/

Retrieving photographic exposure from an image

All you can hope to measure with your camera and some images is the relative reflected luminance, even if you have the camera settings. https://en.wikipedia.org/wiki/Relative_luminance

If you REALLY want to know the amount of light in absolute radiometric units, you’re going to need to use some kind of absolute light meter or measured light source to calibrate your camera. For references on how to do this, see: Section 2.5 Obtaining Absolute Radiance from http://www.pauldebevec.com/Research/HDR/debevec-siggraph97.pdf

If you are still trying to gauge relative brightness, the level of the sun in Nuke can vary, but it should be in the tens of thousands, i.e. between 30,000 and 65,000 RGB value depending on time of day, season and atmospherics.

The value for a 12 o’clock sun, with the sun sampled at EV 15.5 (shutter 1/30, ISO 100, f/22), is 32,000 RGB max (or 32,000 pixel luminance).

The thing to keep an eye on is the level of contrast between sunny side and fill side. The terminator should be quite obvious; there can be up to 3 stops difference between fill and key in sunny lit objects.

Note: In Foundry’s Nuke, the software will map 18% gray to whatever your center f/stop is set to in the viewer settings (f/8 by default… change that to EV by following the instructions below).

You can experiment with this by attaching an Exposure node to a Constant set to 0.18, setting your viewer read-out to Spotmeter, and adjusting the stops in the node up and down. You will see that a full stop up or down will give you the respective next value on the aperture scale (f8, f11, f16 etc.).

One stop doubles or halves the amount of light that hits the filmback/ccd, so everything works in powers of 2.

So starting with 0.18 in your constant, you will see that raising it by a stop will give you .36 as a floating point number (in linear space), while your f/stop will be f/11 and so on.

If you set your center stop to 0 (see below) you will get a relative readout in EVs, where EV 0 again equals 18% constant gray.
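The arithmetic behind that experiment can be sketched in plain Python. This does not call any Nuke API; it only mirrors the numbers described above, and the function names are assumptions.

```python
import math

def value_after_stops(value: float, stops: float) -> float:
    """Linear pixel value after raising exposure by `stops` (x2 per stop)."""
    return value * 2 ** stops

def f_stop_after_stops(center_f_stop: float, stops: float) -> float:
    """Aperture-scale readout: each stop multiplies the f-number by sqrt(2)."""
    return center_f_stop * math.sqrt(2) ** stops

# Start at 18% gray with the default f/8 center stop.
for stops in range(0, 4):
    v = value_after_stops(0.18, stops)
    f = f_stop_after_stops(8, stops)
    print(f"{stops:+d} stops: value {v:.2f}, f/{f:.1f}")
```

The computed f-numbers (11.3, 22.6) are the exact values behind the rounded f/11 and f/22 markings.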

Note: make sure to set your Nuke read node to ‘raw data’.

In other words, setting the center f-stop to 0 means that in a neutral plate, the middle gray in the Macbeth chart will equal exposure value 0. EV 0 corresponds to an exposure time of 1 sec and an aperture of f/1.0.

To switch the SpotMeter in Foundry’s Nuke to return the EV of an image, click on the main viewport and then press s to open the viewer’s properties. Set the center f-stop to 0 there, and the SpotMeter in the viewport will change from aperture and f-stops to EV.

If you are trying to gauge the EV from the pixel luminance in the image:

– Setting the center f-stop to 0 means that in a neutral plate, the middle 18% gray will equal exposure value 0.

– So if EV 0 = 0.18 middle gray in Nuke, which equals a pixel luminance of 0.18, doubling that value raises the EV by 1:

- 0.18 pixel luminance = 0 EV

- 0.36 pixel luminance = 1 EV

- 0.72 pixel luminance = 2 EV

- 1.44 pixel luminance = 3 EV

- ...

This is a Geometric Progression function: x_n = a × r^(n−1)

The most basic example of this function is 1,2,4,8,16,32,… The sequence starts at 1 and doubles each time, so

- a=1 (the first term)

- r=2 (the “common ratio” between terms is a doubling)

And we get:

{a, ar, ar², ar³, … }

= {1, 1×2, 1×2², 1×2³, … }

= {1, 2, 4, 8, … }

In this example the function translates to: x_n = 2^(n−1)
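The doubling sequence and its inverse can be reproduced with a short sketch. The function names are assumptions; a = 1 and r = 2 match the example above.

```python
import math

def geometric_term(n: int, a: float = 1, r: float = 2) -> float:
    """n-th term of a geometric progression: a * r^(n - 1)."""
    return a * r ** (n - 1)

def term_index(x: float, a: float = 1, r: float = 2) -> float:
    """Inverse: which position n gives the value x (log_r(x / a) + 1)."""
    return math.log(x / a) / math.log(r) + 1

print([geometric_term(n) for n in range(1, 7)])  # [1, 2, 4, 8, 16, 32]
print(round(term_index(32)))                     # 6
```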

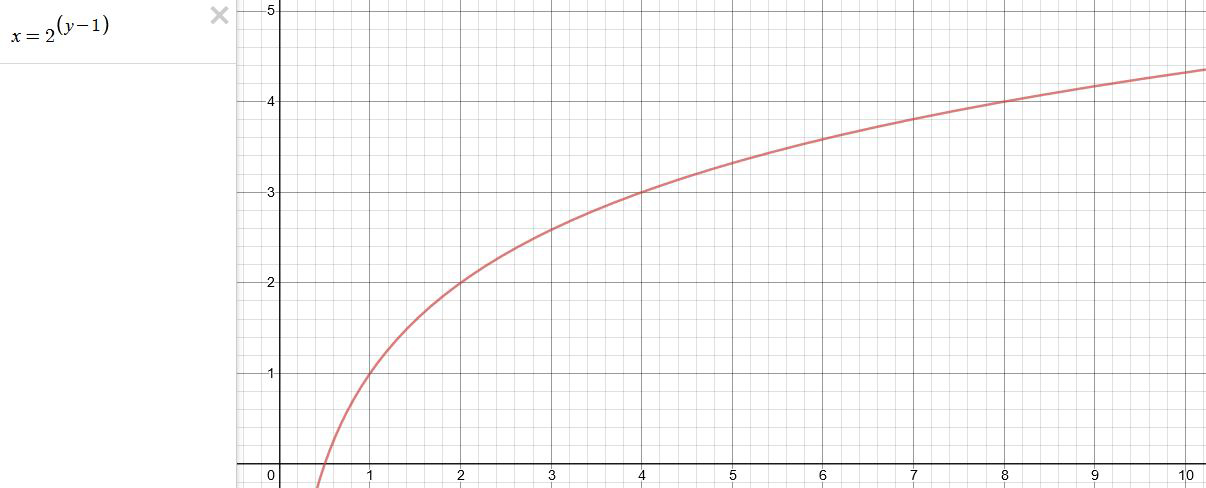

You can graph this curve through this expression: x = 2^(y−1)

You can go back and forth between the two values through a geometric progression function and a log function:

(Note: in a spreadsheet this is: =POWER(2, cell# - 1) and =LOG(cell#, 2) + 1)

| x = 2^(y−1) | y = log₂(x) + 1 |
| --- | --- |
| 1 | 1 |
| 2 | 2 |
| 4 | 3 |
| 8 | 4 |
| 16 | 5 |
| 32 | 6 |
| 64 | 7 |
| 128 | 8 |
| 256 | 9 |
| 512 | 10 |
| 1024 | 11 |
| 2048 | 12 |
| 4096 | 13 |

Translating this into a geometric progression between an image pixel luminance and EV:
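One way to express the mapping between linear pixel luminance and relative EV is sketched below, assuming (as described earlier) that 0.18 middle gray is EV 0 and that each doubling adds one EV. The function names are hypothetical and nothing here calls Nuke itself.

```python
import math

MIDDLE_GRAY = 0.18  # linear pixel luminance treated as EV 0

def ev_from_pixel_luminance(luminance: float) -> float:
    """Relative EV of a linear pixel value: log2(luminance / 0.18)."""
    return math.log2(luminance / MIDDLE_GRAY)

def pixel_luminance_from_ev(ev: float) -> float:
    """Linear pixel value for a relative EV: 0.18 * 2^EV."""
    return MIDDLE_GRAY * 2 ** ev

for lum in (0.18, 0.36, 0.72, 1.44):
    print(f"{lum:.2f} pixel luminance = {ev_from_pixel_luminance(lum):.0f} EV")
```

These are relative EVs read from the image, so they only line up with camera EVs if the plate was exposed so that middle gray sits at 0.18.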


DISCLAIMER – Links and images on this website may be protected by the respective owners’ copyright. All data submitted by users through this site shall be treated as freely available to share.