creativetacos.com/category/fonts/script-fonts/


POPULAR SEARCHES unreal | pipeline | virtual production | free | learn | photoshop | 360 | macro | google | nvidia | resolution | open source | hdri | real-time | photography basics | nuke

StratusCore will provide the following to VES members:

– 40 hours of Virtual Workstation use per month

– 25 render credits per month

– 50 GB of hot storage

– 50% off all purchases

www.ibc.org/create-and-produce/re-animators-night-of-the-living-avatars/5504.article

“When your performance is captured as data it can be manipulated, reworked or sampled, much like the music industry samples vocals and beats. If we can do that then where does the intellectual property lie? Who owns authorship of the performance? Where are the boundaries?”

“Tracking use of an original data captured performance is tricky given that any character or creature you can imagine can be animated using the artist’s work as a base.”

“Conventionally, when an actor contracts with a studio they will assign rights to their performance in that production to the studio. Typically, that would also licence the producer to use the actor’s likeness in related uses, such as marketing materials, or video games.

Similarly, a digital avatar will be owned by the commissioners of the work who will buy out the actor’s performance for that role and ultimately own the IP.

However, in UK law there is no such thing as an ‘image right’ or ‘personality right’ because there is no legal process in the UK which protects the Intellectual Property Rights that identify an image or personality.

The only way in which a pure image right can be protected in the UK is under the Law of Passing-Off.”

“Whether a certain project is ethical or not depends mainly on the purpose of using the ‘face’ of the dead actor,” “Legally, when an actor dies, the rights of their [image/name/brand] are controlled through their estate, which is often managed by family members. This can mean that different people have contradictory ideas about what is and what isn’t appropriate.”

“The advance of performance capture and VFX techniques can be liberating for much of the acting community. In theory, they would be cast on talent alone, rather than defined by how they look.”

“The question is whether that is ethically right.”

getwrightonit.com/animation-price-guide/

“Estimate the cost of animation projects for different mediums, styles, quality and duration using our interactive instant animation price calculator. Use this price guide to calculate a ballpark figure for your next animation project.”

getwrightonit.com/animation-cost-per-minute-inflation-adjusted/

“The cost per minute to produce the traditionally animated films from the 1930s – 1960 was much lower than today even when adjusted for inflation. This is likely due to low paid animators pulling excessive unpaid overtime, including an army of women in the Ink and Paint department who barely made enough money to cover the rent.”

“Overall, animation is a high cost and labor intensive way to get a story to the screen, but there are big returns to be made, particularly with re-releases as a new generation of young audience members discover the films.”

https://www.cg-wire.com/en/kitsu

Kitsu is a web application for tracking the progress of your productions. It improves communication between all stakeholders of the production, which leads to better pictures and faster deliveries.

CGWire PRESS RELEASE

“We noticed that a good way to improve the quality of CG movies is to improve communication inside the studio. That’s why we made software that is easy to use. All the stakeholders of the production can add and get data efficiently. Everyone is better informed and makes better decisions.

The most notable features of Kitsu are:

– The listing of all elements of the production: assets, shots and tasks.

– A powerful commenting system that lets you put notes on tasks while changing status and attaching previews.

– A playlist system to view, compare, annotate and comment shots in a row. It’s super easy for the director to perform his reviews.

– A news feed to know in real-time what is happening during the production.

– Quota tables to evaluate the productivity of the studio.

Aside from that, we added other tools to simplify daily usage: timesheets, scheduling, production statistics, Slack integration and casting management.

Kitsu Today

CGWire is deployed in 25 studios. Most of them are split across different locations, so our users are spread across more than 15 countries, working on productions of all kinds: TV series, feature films and short movies (our customers are Cube Creative, TNZPV, Miyu, Akami, Lee Film, etc.). Once shipped, all productions tracked with Kitsu met success by receiving awards or getting millions of views on YouTube or on TV.

Another good thing is that animation schools really enjoy our product: 10 of them are using Kitsu to manage their end-of-studies projects (Les Gobelins, Ecole des Nouvelles Images, LISAA, etc.).

Our goal in 2020 is to make the ingestion process even better with a stronger import system, software integration and production templates. With these features, we want to be the reference software for building animation productions, especially for TV series.”


Concierge Render allows you to render animations in parallel on up to 64 nodes, harnessing the power of over 500 GPUs per job at prices as low as $0.35 per GPU per hour. Eevee renders cost $2 per server per hour, on up to 48 servers.

With over 40,000 GPUs available, Concierge Render can meet most projects’ size and deadlines.

All frames are processed simultaneously. Up to 520 GPUs will process each project with unprecedented speed. Still images are processed on multi-GPU servers and animations are rendered over a proprietary distributed GPU network.

Concierge Render offers a system with zero queue so a project starts rendering immediately.
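As a rough back-of-the-envelope check, the quoted rates can be turned into a ballpark job cost. This is only a sketch: the function names are made up for illustration, the $0.35 figure is the advertised "as low as" price (so the result is a lower bound), and real billing may round or tier differently.

```python
def gpu_render_cost(gpu_count, hours, rate_per_gpu_hour=0.35):
    """Lower-bound cost in dollars for a GPU job at the advertised minimum rate."""
    return gpu_count * hours * rate_per_gpu_hour


def eevee_render_cost(server_count, hours, rate_per_server_hour=2.0):
    """Cost in dollars for an Eevee job billed per server per hour."""
    return server_count * hours * rate_per_server_hour


# Hypothetical jobs: 520 GPUs for 2 hours, and 48 Eevee servers for 1 hour.
gpu_job = gpu_render_cost(gpu_count=520, hours=2)
eevee_job = eevee_render_cost(server_count=48, hours=1)
print(f"GPU job: ${gpu_job:.2f}, Eevee job: ${eevee_job:.2f}")
```

Because every frame is rendered in parallel, the wall-clock time drops with more GPUs but the GPU-hours (and therefore the cost) stay roughly the same.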

en.wikipedia.org/wiki/Go_motion

Petroleum jelly

This crude but reasonably effective technique, also known as vaselensing, involves smearing petroleum jelly (“Vaseline”) on a plate of glass in front of the camera lens, then cleaning and reapplying it after each shot. The process is time-consuming, but it creates a blur around the model. This technique was used for the endoskeleton in The Terminator. This process was also employed by Jim Danforth to blur the pterodactyl’s wings in Hammer Films’ When Dinosaurs Ruled the Earth, and by Randal William Cook on the terror dogs sequence in Ghostbusters.

Bumping the puppet

Gently bumping or flicking the puppet before taking the frame will produce a slight blur; however, care must be taken when doing this that the puppet does not move too much or that one does not bump or move props or set pieces.

Moving the table

Moving the table on which the model is standing while the film is being exposed creates a slight, realistic blur. This technique was developed by Ladislas Starevich: when the characters ran, he moved the set in the opposite direction. This is seen in The Little Parade when the ballerina is chased by the devil. Starevich also used this technique on his films The Eyes of the Dragon, The Magical Clock and The Mascot. Aardman Animations used this for the train chase in The Wrong Trousers and again during the lorry chase in A Close Shave. In both cases the cameras were moved physically during a 1-2 second exposure. The technique was revived for the full-length Wallace & Gromit: The Curse of the Were-Rabbit.

Go motion

The most sophisticated technique, go motion, was originally developed for the film The Empire Strikes Back, where it was used for some shots of the tauntauns, and was later used on films like Dragonslayer. It is quite different from traditional stop motion. The model is essentially a rod puppet. The rods are attached to motors which are linked to a computer that can record the movements as the model is traditionally animated. When enough movements have been made, the model is reset to its original position, the camera rolls and the model is moved across the table. Because the model is moving during shots, motion blur is created.

A variation of go motion was used in E.T. the Extra-Terrestrial to partially animate the children on their bicycles.

Real-World Measurements for Call of Duty: Advanced Warfare

www.activision.com/cdn/research/Real_World_Measurements_for_Call_of_Duty_Advanced_Warfare.pdf

Local version

Real_World_Measurements_for_Call_of_Duty_Advanced_Warfare.pdf

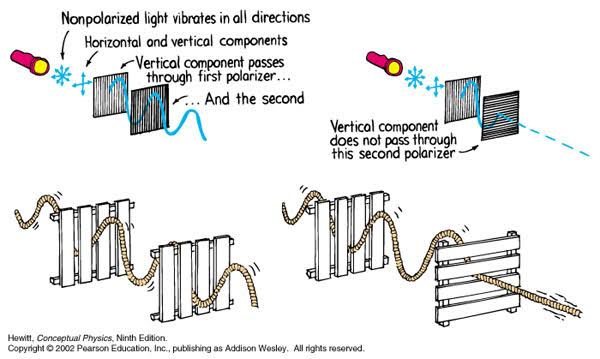

A light wave that is vibrating in more than one plane is referred to as unpolarized light. …

Polarized light waves are light waves in which the vibrations occur in a single plane. The process of transforming unpolarized light into polarized light is known as polarization.

en.wikipedia.org/wiki/Polarizing_filter_(photography)

The most common use of polarized technology is to reduce lighting complexity on the subject.

Details such as glare and hard edges are not removed, but greatly reduced.
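To get a feel for why a polarizing filter reduces glare, the standard textbook model is Malus's law: an ideal linear polarizer passes a fraction cos²(θ) of light that is already linearly polarized, where θ is the angle between the light's polarization plane and the filter axis. This law is not part of the quoted sources; the sketch below assumes ideal filters and fully polarized light, which real glare only approximates.

```python
import math


def transmitted_fraction(angle_degrees):
    """Fraction of linearly polarized light passed by an ideal polarizer
    whose axis is rotated angle_degrees away from the polarization plane
    (Malus's law, an idealised model)."""
    return math.cos(math.radians(angle_degrees)) ** 2


for angle in (0, 30, 45, 60, 90):
    print(f"{angle:>2} degrees -> {transmitted_fraction(angle):.3f}")
```

At 90 degrees (filter axis perpendicular to the polarization plane) the transmitted fraction is effectively zero, which is why rotating the filter can suppress polarized reflections while unpolarized diffuse light still gets through.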

Building a Portable PBR Texture Scanner by Stephane Lb

http://rtgfx.com/pbr-texture-scanner/

How To Split Specular And Diffuse In Real Images, by John Hable

http://filmicworlds.com/blog/how-to-split-specular-and-diffuse-in-real-images/

Capturing albedo using a Spectralon

https://www.activision.com/cdn/research/Real_World_Measurements_for_Call_of_Duty_Advanced_Warfare.pdf

Real_World_Measurements_for_Call_of_Duty_Advanced_Warfare.pdf

Spectralon is a teflon-based pressed powder that comes closest to being a pure Lambertian diffuse material that reflects 100% of all light. If we take an HDR photograph of the Spectralon alongside the material to be measured, we can derive the diffuse albedo of that material.

The process to capture diffuse reflectance is very similar to the one outlined by Hable.

1. We put a linear polarizing filter in front of the camera lens and a second linear polarizing filter in front of a modeling light or a flash such that the two filters are oriented perpendicular to each other, i.e. cross polarized.

2. We place Spectralon close to and parallel with the material we are capturing and take bracketed shots of the setup. Typically, we’ll take nine photographs, from -4EV to +4EV in 1EV increments.

3. We convert the bracketed shots to a linear HDR image. We found that many HDR packages do not produce an HDR image in which the pixel values are linear. PTGui is an example of a package which does generate a linear HDR image. At this point, because of the cross polarization, the image is one of surface diffuse response.

4. We open the file in Photoshop and normalize the image by color picking the Spectralon, filling a new layer with that color and setting that layer to “Divide”. This sets the Spectralon to 1 in the image. All other color values are relative to this so we can consider them as diffuse albedo.
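The "Divide" layer in step 4 is just a per-channel division of every pixel by the colour picked from the Spectralon. A minimal sketch of that arithmetic, assuming linear RGB values already read out of the HDR image; the function name and the sample pixel values are hypothetical:

```python
def normalize_by_spectralon(pixels, spectralon_rgb):
    """Divide each linear RGB pixel by the Spectralon reference colour,
    so the Spectralon itself becomes (1, 1, 1) and everything else reads
    as diffuse albedo relative to it."""
    if any(ref <= 0 for ref in spectralon_rgb):
        raise ValueError("Spectralon reference channels must be positive")
    return [
        tuple(channel / ref for channel, ref in zip(pixel, spectralon_rgb))
        for pixel in pixels
    ]


# Hypothetical linear HDR samples (not measured data).
spectralon = (0.80, 0.78, 0.76)
material_pixels = [(0.40, 0.39, 0.19), (0.20, 0.156, 0.076)]

albedo = normalize_by_spectralon(material_pixels, spectralon)
for rgb in albedo:
    print(tuple(round(c, 3) for c in rgb))
```

This only works if the pixel values really are linear, which is why step 3 stresses using an HDR package that produces linear output.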

amasci.com/amateur/holohint.html#0

Each surface scratch acts as a bent mirror and reflects sunlight. Each reflection looks like a small white highlight on the shiny scratch. Each of your eyes sees a DIFFERENT REFLECTION. Your brain thinks the two different reflections are really one white dot located deep behind the scratch. It’s like a “viewmaster” stereo viewer.

MaterialX is an open standard for transfer of rich material and look-development content between applications and renderers.

Originated at Lucasfilm in 2012, MaterialX has been used by Industrial Light & Magic in feature films such as Star Wars: The Force Awakens and Rogue One: A Star Wars Story, and by ILMxLAB in real-time experiences such as Trials On Tatooine.

MaterialX addresses the need for a common, open standard to represent the data values and relationships required to transfer the complete look of a computer graphics model from one application or rendering platform to another, including shading networks, patterns and texturing, complex nested materials and geometric assignments.

To further encourage interchangeable CG look setups, MaterialX also defines a complete set of data creation and processing nodes with a precise mechanism for functional extensibility.

blogs.nvidia.com/blog/2019/03/18/omniverse-collaboration-platform/

developer.nvidia.com/nvidia-omniverse

An open, interactive 3D design collaboration platform for multi-tool workflows, intended to simplify studio workflows for real-time graphics.

It supports Pixar’s Universal Scene Description technology for exchanging information about modeling, shading, animation, lighting, visual effects and rendering across multiple applications.

It also supports NVIDIA’s Material Definition Language, which allows artists to exchange information about surface materials across multiple tools.

With Omniverse, artists can see live updates made by other artists working in different applications. They can also see changes reflected in multiple tools at the same time.

For example, an artist using Maya with a portal to Omniverse can collaborate with another artist using UE4, and both will see live updates of each other’s changes in their application.

www.nvidia.com/en-us/design-visualization/technologies/material-definition-language/

THE NVIDIA MATERIAL DEFINITION LANGUAGE (MDL) gives you the freedom to share physically based materials and lights between supporting applications.

For example, create an MDL material in an application like Allegorithmic Substance Designer, save it to your library, then use it in NVIDIA® Iray® or Chaos Group’s V-Ray, or any other supporting application.

Unlike a shading language that produces programs for a particular renderer, MDL materials define the behavior of light at a high level. Different renderers and tools interpret the light behavior and create the best possible image.

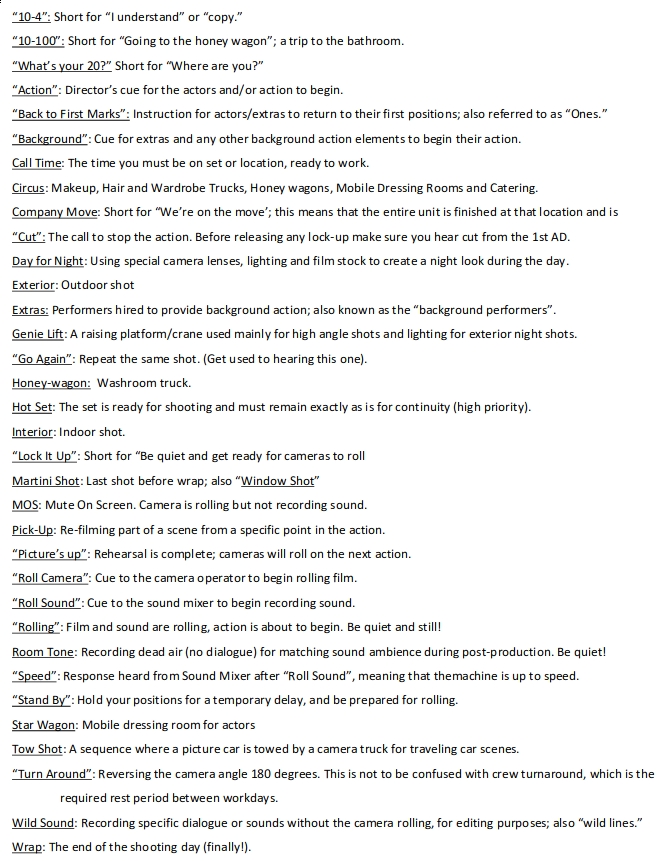

www.ubcp.com/wp-content/uploads/Terminology-on-Film-Sets.pdf

TERMINOLOGY USED on FILM SETS

“10-4”: Short for “I understand” or “copy.”

“10-100”: Short for “Going to the honey wagon”; a trip to the bathroom.

“What’s your 20?” Short for “Where are you?”

“Action”: Director’s cue for the actors and/or action to begin.

“Back to First Marks”: Instruction for actors/extras to return to their first positions; also referred to as “Ones.”

“Background”: Cue for extras and any other background action elements to begin their action.

Call Time: The time you must be on set or location, ready to work.

Circus: Makeup, Hair and Wardrobe Trucks, Honey wagons, Mobile Dressing Rooms and Catering.

Company Move: Short for “We’re on the move”; this means that the entire unit is finished at that location and is

“Cut”: The call to stop the action. Before releasing any lock-up make sure you hear cut from the 1st AD.

Day for Night: Using special camera lenses, lighting and film stock to create a night look during the day.

Exterior: Outdoor shot

Extras: Performers hired to provide background action; also known as the “background performers”.

Genie Lift: A raising platform/crane used mainly for high angle shots and lighting for exterior night shots.

“Go Again”: Repeat the same shot. (Get used to hearing this one).

Honey-wagon: Washroom truck.

Hot Set: The set is ready for shooting and must remain exactly as is for continuity (high priority).

Interior: Indoor shot.

“Lock It Up”: Short for “Be quiet and get ready for cameras to roll.”

Martini Shot: Last shot before wrap; also “Window Shot”

MOS: Mute On Screen. Camera is rolling but not recording sound.

Pick-Up: Re-filming part of a scene from a specific point in the action.

“Picture’s up”: Rehearsal is complete; cameras will roll on the next action.

“Roll Camera”: Cue to the camera operator to begin rolling film.

“Roll Sound”: Cue to the sound mixer to begin recording sound.

“Rolling”: Film and sound are rolling, action is about to begin. Be quiet and still!

Room Tone: Recording dead air (no dialogue) for matching sound ambience during post-production. Be quiet!

“Speed”: Response heard from Sound Mixer after “Roll Sound”, meaning that the machine is up to speed.

“Stand By”: Hold your positions for a temporary delay, and be prepared for rolling.

Star Wagon: Mobile dressing room for actors

Tow Shot: A sequence where a picture car is towed by a camera truck for traveling car scenes.

“Turn Around”: Reversing the camera angle 180 degrees. This is not to be confused with crew turnaround, which is the required rest period between workdays.

Wild Sound: Recording specific dialogue or sounds without the camera rolling, for editing purposes; also “wild lines.”

Wrap: The end of the shooting day (finally!).

Also see:

https://www.pixelsham.com/2018/11/22/exposure-value-measurements/

https://www.pixelsham.com/2016/03/03/f-stop-vs-t-stop/

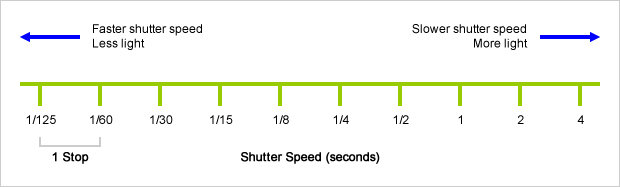

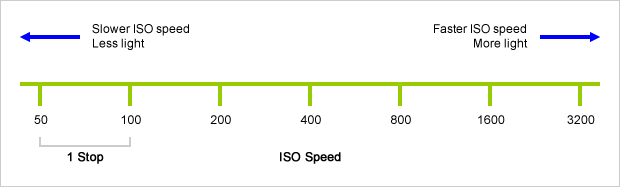

An exposure stop is a unit of measurement of exposure. As such, it provides a universal scale, linear in stops, for measuring the increase and decrease in light reaching the image sensor due to changes in shutter speed, ISO and f-stop.

±1 stop is a doubling or halving of the amount of light let in when taking a photo.

1 EV (exposure value) is just another way to say one stop of exposure change.

https://www.photographymad.com/pages/view/what-is-a-stop-of-exposure-in-photography

The same applies to shutter speed, ISO and aperture.

Doubling or halving your shutter speed produces an increase or decrease of 1 stop of exposure.

Doubling or halving your ISO speed produces an increase or decrease of 1 stop of exposure.
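Since one stop is a factor of two in light, the number of stops between two settings is a base-2 logarithm. The sketch below shows that arithmetic. The function names are made up for illustration, and the aperture formula relies on the standard assumption (not stated above) that light through the lens scales with 1/N² for f-number N.

```python
import math


def stops_from_shutter(old_seconds, new_seconds):
    """Stops gained (+) or lost (-) when the exposure time changes."""
    return math.log2(new_seconds / old_seconds)


def stops_from_iso(old_iso, new_iso):
    """Stops gained (+) or lost (-) when the ISO changes."""
    return math.log2(new_iso / old_iso)


def stops_from_aperture(old_f_number, new_f_number):
    """Stops gained (+) or lost (-) when the f-number changes.
    Assumes light is proportional to 1 / f_number**2."""
    return 2 * math.log2(old_f_number / new_f_number)


print(stops_from_shutter(1 / 100, 1 / 50))  # exposure time doubled
print(stops_from_iso(100, 400))             # ISO quadrupled
print(stops_from_aperture(4.0, 2.8))        # opening up from f/4 to f/2.8
```

The f/4 to f/2.8 case comes out slightly above one stop because marked f-numbers are rounded; the exact one-stop step from f/4 is f/2.828.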

nerdist.com/article/joe-letteri-avatar-alita-battle-angel-james-cameron-martin-scorsese/

[Any] story [has to be] complete in itself. If there are gaps that you’re hoping will be filled in with visual effects, you’re likely to be disappointed. We can add ideas, we can help in whatever way that we can, but you want to make sure that when you read it, it reads well.

[Our responsibility as VFX artist] I think first and foremost [is] to engage the audience. Everything that we do has to be part of the audience wanting to sit there and watch that movie and see what happens next. And it’s a combination of things. It’s the drama of the characters. It’s maybe what you can do to a scene to make it compelling to look at, the realism that you might need to get people drawn into that moment. It could be any number of things, but it’s really about just making sure that you’re always in mind of how the audience is experiencing what they’re seeing.


DISCLAIMER – Links and images on this website may be protected by the respective owners’ copyright. All data submitted by users through this site shall be treated as freely available to share.