Author: pIXELsHAM.com

-

The Mill opens new offices in Berlin

www.themill.com/stories/the-mill-opens-new-studio-in-berlin/

Greg Spencer will lead a multi-disciplinary team of artists in his role of Creative Director.

Justin Stiebel will be continuing his role as Executive Producer at The Mill Berlin and will be managing client relationships as well as all new business enquiries.

-

Use a USB keychain as a scratch disk on Photoshop

Use this at your own risk. ;)

The key is to change the USB from a “USB drive” to “local disk” type.

Steps:
woshub.com/removable-usb-flash-drive-as-local-disk-in-windows-7/

To force the driver update:
appuals.com/how-to-fix-the-third-party-inf-doesnt-contain-digital-signature-information/

Zip file attached to this post.

-

Methods for creating motion blur in Stop motion

en.wikipedia.org/wiki/Go_motion

Petroleum jelly

This crude but reasonably effective technique, also known as vaselensing, involves smearing petroleum jelly (“Vaseline”) on a plate of glass in front of the camera lens, then cleaning and reapplying it after each shot. The process is time-consuming, but it creates a blur around the model. This technique was used for the endoskeleton in The Terminator. It was also employed by Jim Danforth to blur the pterodactyl’s wings in Hammer Films’ When Dinosaurs Ruled the Earth, and by Randall William Cook on the terror dogs sequence in Ghostbusters.[citation needed]

Bumping the puppet

Gently bumping or flicking the puppet before taking the frame will produce a slight blur; however, care must be taken when doing this that the puppet does not move too much or that one does not bump or move props or set pieces.

Moving the table

Moving the table on which the model is standing while the film is being exposed creates a slight, realistic blur. This technique was developed by Ladislas Starevich: when the characters ran, he moved the set in the opposite direction. This is seen in The Little Parade when the ballerina is chased by the devil. Starevich also used this technique on his films The Eyes of the Dragon, The Magical Clock and The Mascot. Aardman Animations used this for the train chase in The Wrong Trousers and again during the lorry chase in A Close Shave. In both cases the cameras were moved physically during a 1-2 second exposure. The technique was revived for the full-length Wallace & Gromit: The Curse of the Were-Rabbit.

Go motion

The most sophisticated technique, go motion, was originally developed for the film The Empire Strikes Back, where it was used for some shots of the tauntauns, and was later used on films like Dragonslayer. It is quite different from traditional stop motion. The model is essentially a rod puppet. The rods are attached to motors which are linked to a computer that can record the movements as the model is traditionally animated. When enough movements have been made, the model is reset to its original position, the camera rolls and the model is moved across the table. Because the model is moving during shots, motion blur is created.

A variation of go motion was used in E.T. the Extra-Terrestrial to partially animate the children on their bicycles.
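As a rough illustration of why movement during the exposure produces blur, here is a minimal Python sketch. It is a toy model, not how any of the productions above worked: the function name `expose`, the one-pixel "puppet" and the one-dimensional "frame" are all assumptions made for the example. The shutter is treated as averaging several snapshots of the object while it travels from one position to another.

```python
def expose(width, start, end, samples):
    """Average `samples` snapshots of a one-pixel object moving from start to end.

    Hypothetical toy model: the frame is a 1D list of brightness values and
    the open shutter is approximated by averaging evenly spaced snapshots.
    """
    frame = [0.0] * width
    for i in range(samples):
        # Fraction of the exposure elapsed at this snapshot (0.0 to 1.0).
        t = i / (samples - 1) if samples > 1 else 0.0
        pos = round(start + (end - start) * t)
        # Each snapshot contributes an equal share of the total light.
        frame[pos] += 1.0 / samples
    return frame


# Traditional stop motion: the puppet is still while the shutter is open.
still = expose(width=5, start=2, end=2, samples=4)

# Go motion or a moving table: the puppet travels during the exposure.
moving = expose(width=5, start=0, end=4, samples=5)
```

In the still case all of the light lands on one pixel, giving a sharp image. In the moving case the same amount of light is smeared across every pixel along the path, which is the streak the techniques above try to create.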

-

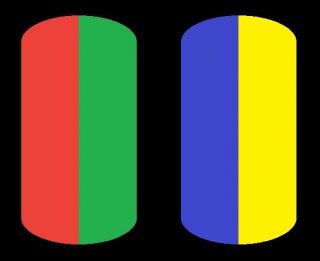

The Forbidden colors – Red-Green & Blue-Yellow: The Stunning Colors You Can’t See

www.livescience.com/17948-red-green-blue-yellow-stunning-colors.html

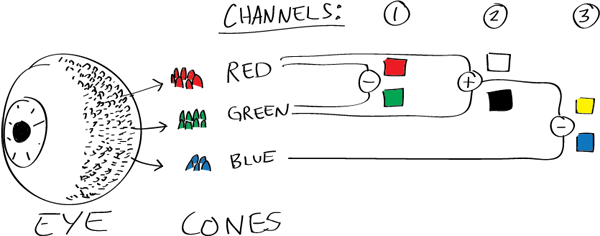

While the human eye has red, green, and blue-sensing cones, those cones are cross-wired in the retina to produce a luminance channel plus a red-green and a blue-yellow channel, and it’s data in that color space (known technically as “LAB”) that goes to the brain. That’s why we can’t perceive a reddish-green or a yellowish-blue, whereas such colors can be represented in the RGB color space used by digital cameras.

https://en.rockcontent.com/blog/the-use-of-yellow-in-data-design

The back of the retina is covered in light-sensitive neurons known as cone cells and rod cells. There are three types of cone cells, each sensitive to different ranges of light. These ranges overlap, but for convenience the cones are referred to as blue (short-wavelength), green (medium-wavelength), and red (long-wavelength). The rod cells are primarily used in low-light situations, so we’ll ignore those for now.

When light enters the eye and hits the cone cells, the cones get excited and send signals through the optic nerve to the brain’s visual cortex. Different wavelengths of light excite different combinations of cones to varying levels, which generates our perception of color. You can see that the red cones are most sensitive to light, and the blue cones are least sensitive. The sensitivity of green and red cones overlaps for most of the visible spectrum.

Here’s how your brain takes the signals of light intensity from the cones and turns them into color information. To see red or green, your brain finds the difference between the levels of excitement in your red and green cones. This is the red-green channel.

To get “brightness,” your brain combines the excitement of your red and green cones. This creates the luminance, or black-white, channel. To see yellow or blue, your brain then finds the difference between this luminance signal and the excitement of your blue cones. This is the yellow-blue channel.
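The three channels described above can be sketched in a few lines of Python. This is only a literal transcription of the simplified description in the text, not a physiological model: the function name `opponent_channels`, the unweighted sums and differences, and the 0 to 1 excitation values are all assumptions made for illustration.

```python
def opponent_channels(red, green, blue):
    """Turn three cone excitations into the three channels described in the text.

    Hypothetical simplification: inputs are cone excitation levels (assumed
    to lie between 0 and 1) and every channel is a plain sum or difference.
    """
    red_green = red - green          # positive leans red, negative leans green
    luminance = red + green          # the "brightness" (black-white) channel
    yellow_blue = luminance - blue   # positive leans yellow, negative leans blue
    return luminance, red_green, yellow_blue


# Red and green cones both strongly excited, blue cones quiet: reads as yellow.
yellow = opponent_channels(1.0, 1.0, 0.0)

# Only the blue cones excited: reads as blue.
blue = opponent_channels(0.0, 0.0, 1.0)
```

Because red-green is a single signed value, it cannot report "red" and "green" at the same time, and the same holds for yellow-blue. That is the sense in which reddish-green and yellowish-blue are called forbidden colors.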

From the calculations made in the brain along those three channels, we get four basic colors: blue, green, yellow, and red. Seeing blue is what you experience when short-wavelength light excites the blue cones more than the green and red.

Seeing green happens when light excites the green cones more than the red cones. Seeing red happens when only the red cones are excited by long-wavelength light.

Here’s where it gets interesting. Seeing yellow is what happens when BOTH the green AND red cones are highly excited near their peak sensitivity. This is the biggest collective excitement that your cones ever have, aside from seeing pure white.

Notice that yellow occurs at peak intensity in the graph to the right. Further, the lens and cornea of the eye happen to block shorter wavelengths, reducing sensitivity to blue and violet light.

-

DNeg possibly charged with fraud

An Oscar-winning visual effects studio aiming for a £600 million stock market flotation has become entangled in an alleged scheme to defraud the taxman.

DNEG, which has worked on films such as No Time to Die and Captain Marvel, could have to pay HM Revenue & Customs more than £10 million in back taxes and penalties.

https://www.thetimes.co.uk/article/visual-effects-studio-reveals-tax-raid-vjb3pj8s3

